The game theory of cooperation

Explained simply

In this series of posts, I am offering a deep explanation of what human morality is. I started this series by pushing back on many popular views about morality, such as the idea that it comes from religion or that there are absolute moral truths “out there”. Instead, I have argued that morality is fundamentally a human affair: it is how we resolve conflicts and organise social cooperation. From that perspective, game theory, particularly as developed in the work of Ken Binmore, provides a framework that explains morality. In this post and the next, I present the core insights this approach offers about cooperation: why it exists and how it works.

Few thinkers’ writings remain relevant over time. Darwin is one of them: as our understanding of human behaviour progresses, it is striking how much he got right. Most people associate Darwin with a view of evolution as a fight of all against all. However, in The Descent of Man, the book in which he turned his theory to humans, he offers an insightful account of human psychology that gives cooperative behaviour its full place. Of particular interest, he describes good reasons why cooperation may exist and why people tend to be willing to cooperate with others:

[A]s the reasoning powers and foresight of the members became improved, each man would soon learn from experience that if he aided his fellow-men, he would commonly receive aid in return. From this low motive he might acquire the habit of aiding his fellows; and the habit of performing benevolent actions certainly strengthens the feeling of sympathy, which gives the first impulse to benevolent actions. Habits, moreover, followed during many generations probably tend to be inherited. — Darwin

Darwin offered two answers to the question of why people help one another. The first was to stress the self-regarding consequences of altruistic actions: helping others increases the likelihood of receiving aid oneself in times of need. The second was to stress how much the praise and blame of neighbours matter to the individual in stimulating virtuous behaviour. Strikingly, these two explanations, which the Folk Theorem unifies, form the backbone of modern game theorists’ and evolutionary biologists’ understanding of the roots of cooperation.

The Folk Theorem

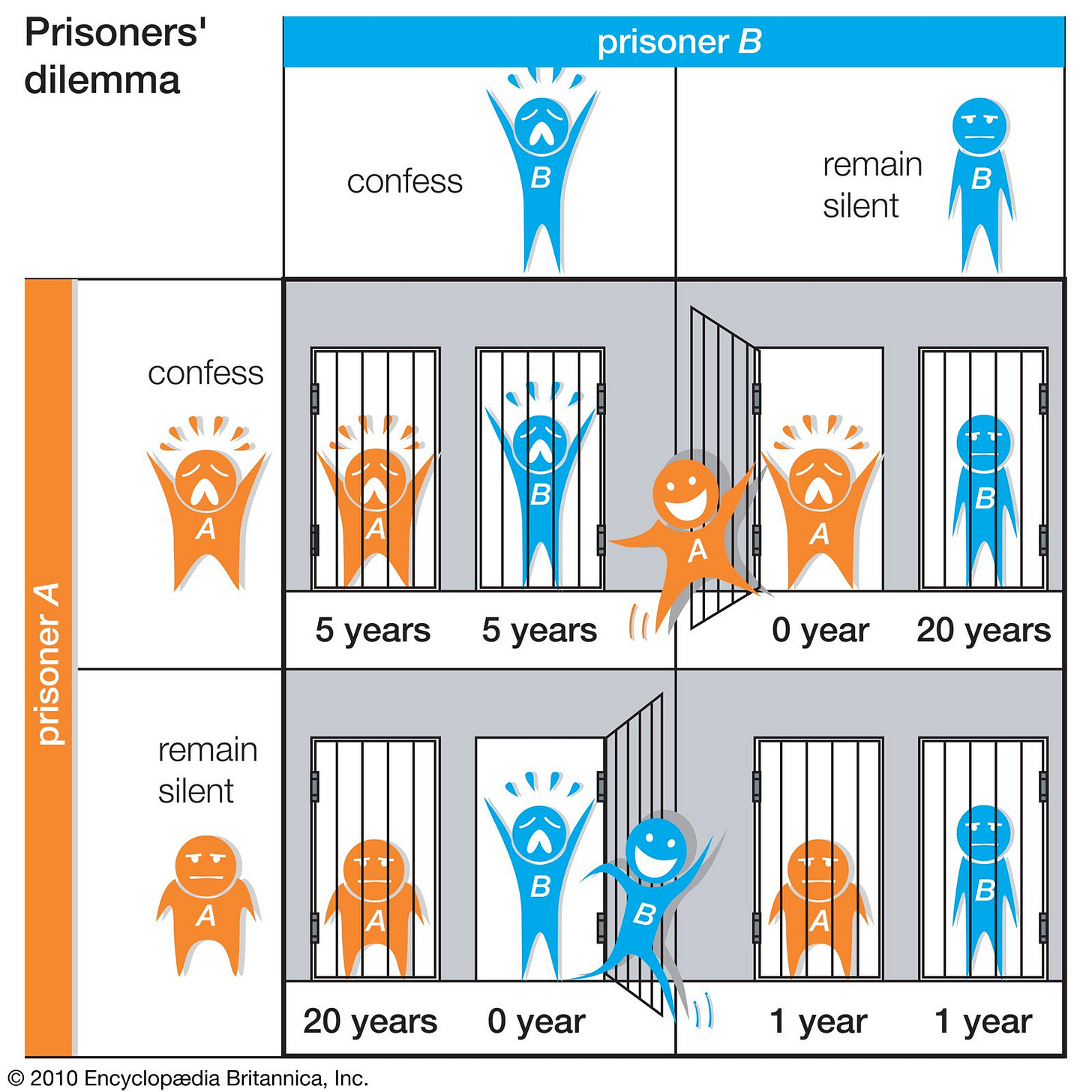

The Prisoner’s Dilemma is one of the most famous games in game theory. In it, two prisoners are each offered the opportunity to “rat” on the other to get a better deal from the police. Each can stay silent (cooperate with the other) or talk (defect). The paradox of the Prisoner’s Dilemma is that each player has an individual interest in defecting, whatever the other does. But if both follow their individual interest and defect, they end up worse off than if they had both stayed silent. This clash between individual and collective interest is what makes it a “social dilemma”. The more general lesson is that there are situations in which, when everybody follows their individual interest, everybody ends up worse off than if they had cooperated.

A moment’s thought shows that such situations are far from rare. Consider, in everyday life, a collective project whose outcome depends on the members of a group working hard on it. Everybody wants the project to succeed, but for any given participant, it may be more convenient not to work too hard and to piggyback on the work of others. Such incentives can make group work fail, with too few people pulling their weight for the project to succeed.

The result of players’ rational behaviour in a Prisoner’s Dilemma is a Nash equilibrium: a situation in which, given the pattern of play each player expects from the others, no player has an interest in changing strategy. In the group example, if participants expect that others won’t work, they have no reason to work themselves. So the failure of the group to work is stable, in the sense that everyone’s actions are consistent with their expectations about what others will do. By contrast, the fully cooperative situation where everybody works may not be stable: if everybody works, each participant may reason that they might as well shirk and still enjoy the fruits of the collective work.
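The group-work example can be made concrete as a public goods game. The sketch below is illustrative only: the group size, the cost of working and the multiplier applied to joint effort are hypothetical values, not taken from this post. Each participant’s effort costs them 10 but adds 16 to a common output that is split equally among 4 people, so each unit of effort returns only 4 to the person who provides it.

```python
# Hypothetical public goods game for the group-work example.
# All parameter values are illustrative assumptions.
N_PLAYERS = 4      # group size
COST = 10          # private cost to a participant of working hard
MULTIPLIER = 1.6   # total value to the group of each unit of effort


def payoff(works: bool, others_working: int) -> float:
    """One participant's payoff, given their own choice and how many others work."""
    total_effort = COST * (others_working + int(works))
    equal_share = MULTIPLIER * total_effort / N_PLAYERS  # output is split equally
    return equal_share - (COST if works else 0)


# Shirking pays more than working, whatever the others do...
for k in range(N_PLAYERS):
    print(f"{k} others work: work -> {payoff(True, k):.1f}, shirk -> {payoff(False, k):.1f}")

# ...yet everybody working beats everybody shirking.
print(payoff(True, N_PLAYERS - 1), payoff(False, 0))
```

With these numbers, working always pays 6 less than shirking, so shirking by everyone is the stable outcome, even though universal effort would give each participant 6 instead of 0.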

The Prisoner’s Dilemma was first studied in this form by Merrill Flood and Melvin Dresher, two analysts at the RAND Corporation.1 In early 1950, they invited two rigorous thinkers, Armen Alchian (an economist at UCLA) and John D. Williams (a RAND mathematician), to play 100 rounds of the game. Interestingly, the two did not simply play the one-shot Nash prediction (mutual defection) round after round. Instead, they experimented for a while, with Williams trying to induce Alchian to cooperate, and overall mutual defection was relatively rare.

Flood and Dresher asked John Nash what to make of this. He answered that the observed behaviour and the attempt at cooperating should not be seen as abnormal. The “100 rounds” should be treated as one big game, not as 100 separate one-shot games. In that setting, a strategy can include rules about how to react to what the other player has done. Conditional strategies of the form “do this if the other did that” can be an equilibrium of this larger game.2

Very early in the history of game theory, it became understood that repeated interactions create the opportunity to sustain cooperation. In their classic 1957 book on game theory, Luce and Raiffa described the possibility of cooperation in the repeated Prisoner’s Dilemma as follows (emphases mine):

[W]e see that in the repeated game the repeated selection of (cooperate, cooperate) is in a sort of quasi-equilibrium: it is not to the advantage of either player to initiate the chaos that results from not conforming, even though the non-conforming strategy is profitable in the short run (one trial).

It is intuitively clear that this quasi-equilibrium pair is extremely unstable; any loss of “faith” in one’s opponent sets up the chain which leads to loss for both players. — Luce and Raiffa (1957)3

This understanding was so widespread that the formal result, first published by Aumann (1959), was later called the Folk Theorem: it had circulated as part of game theory’s “folklore” before a proof was published.

The folk theorem explained intuitively

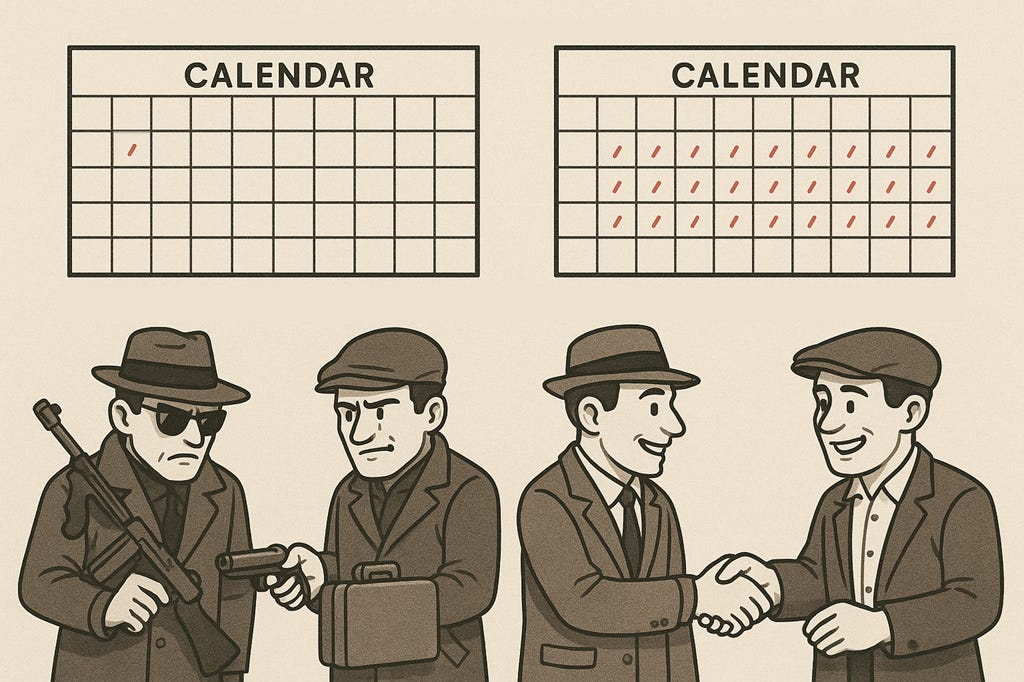

The intuition of the folk theorem is easy to grasp. Imagine two gangsters who plan an exchange of money for drugs. They would have no qualms about robbing each other. If the transaction is a one-off, they face a Prisoner’s Dilemma. Both would be happy if the transaction happened as planned, but each would be even better off taking the other’s property without handing over their own. Gangster movies are replete with scenes where such exchanges end in betrayal, with one party dead.

However, the strategic nature of this interaction changes radically if they expect to trade with each other regularly over time (e.g. repeated drug deals where one always brings the money and the other the substance). They can then cooperate according to agreed rules and both benefit from these interactions.

The reason is that the prospect of possible future cooperation has a policing effect on the present. The “shadow of the future” is cast over present interactions.4 For fear of losing the gains from future cooperation, both gangsters may end up cooperating in each period.

The folk theorem with minimal maths

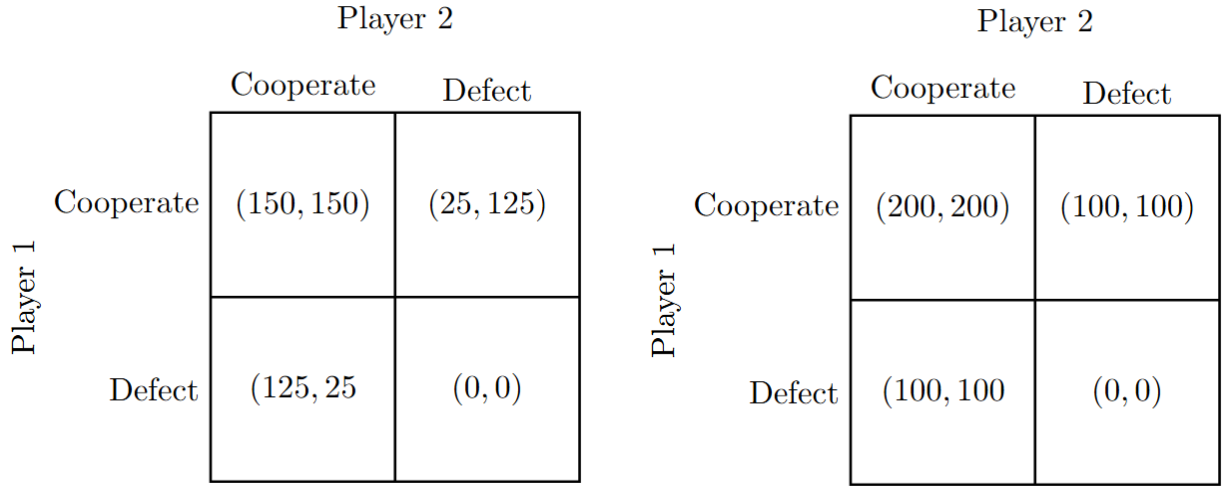

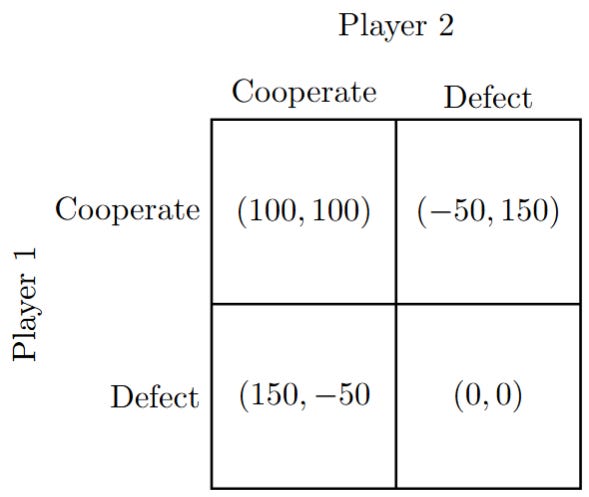

Consider the Prisoner’s Dilemma payoff matrix in the figure. For each pair of actions, the cell contains the payoffs of Player 1 and Player 2 (in that order). The Nash equilibrium is mutual defection, an outcome that is not “socially optimal”: both players would be better off if they both cooperated.
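The matrix itself appears only as an image, so the numbers in this sketch are assumptions: 100 each for mutual cooperation, 0 each for mutual defection, and 150 versus -50 when one player defects on a cooperator. They are chosen to match the averages quoted in the notes (100 for (C,C), 0 for (D,D), 50 when the players take turns defecting) and to respect the usual Prisoner’s Dilemma ordering. A brute-force check of every action pair confirms that mutual defection is the only pure-strategy Nash equilibrium.

```python
from itertools import product

# Hypothetical payoffs (assumed, since the original matrix is an image):
# each cell is (payoff to Player 1, payoff to Player 2).
PAYOFFS = {
    ("C", "C"): (100, 100),
    ("C", "D"): (-50, 150),
    ("D", "C"): (150, -50),
    ("D", "D"): (0, 0),
}
ACTIONS = ("C", "D")


def pure_nash_equilibria(payoffs):
    """Action pairs where neither player gains by changing their own action alone."""
    equilibria = []
    for a1, a2 in product(ACTIONS, repeat=2):
        u1, u2 = payoffs[(a1, a2)]
        player1_ok = all(u1 >= payoffs[(b, a2)][0] for b in ACTIONS)
        player2_ok = all(u2 >= payoffs[(a1, b)][1] for b in ACTIONS)
        if player1_ok and player2_ok:
            equilibria.append((a1, a2))
    return equilibria


print(pure_nash_equilibria(PAYOFFS))  # [('D', 'D')]

# Both players would nonetheless prefer mutual cooperation.
print(all(c > d for c, d in zip(PAYOFFS[("C", "C")], PAYOFFS[("D", "D")])))  # True
```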

The insight from the Folk Theorem is simple: when play is indefinitely repeated (repeated without a clear end in sight), players can do better than the Nash equilibrium of the stand-alone game. They can adopt strategies of conditional cooperation of the form: I will cooperate now if the other has cooperated before. The simplest such strategy is the “grim strategy”: I cooperate as long as the other person has always cooperated; once the other has defected, I defect forever after.
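A minimal sketch of the grim strategy, assuming a strategy is simply a function from the history of play to a move. The fixed number of rounds only makes the simulation terminate; it is not meant to be known to the players. The helper names (`grim`, `always_defect`, `defect_once_at`, `play`) are hypothetical.

```python
from typing import Callable, List, Tuple

# Each history entry is (my move, other's move); moves are "C" or "D".
History = List[Tuple[str, str]]
Strategy = Callable[[History], str]


def grim(history: History) -> str:
    """Cooperate until the other player defects once, then defect forever."""
    return "D" if any(theirs == "D" for _, theirs in history) else "C"


def always_defect(history: History) -> str:
    return "D"


def defect_once_at(round_index: int) -> Strategy:
    """Hypothetical player who is tempted once, then goes back to cooperating."""
    def strategy(history: History) -> str:
        return "D" if len(history) == round_index else "C"
    return strategy


def play(s1: Strategy, s2: Strategy, rounds: int) -> Tuple[List[str], List[str]]:
    """Play two strategies against each other and return each player's moves."""
    h1: History = []
    h2: History = []
    for _ in range(rounds):
        m1, m2 = s1(h1), s2(h2)
        h1.append((m1, m2))
        h2.append((m2, m1))
    return [m for m, _ in h1], [m for m, _ in h2]


print(play(grim, grim, 5))
print(play(grim, always_defect, 5))
print(play(grim, defect_once_at(2), 6))
```

The last run shows the threat at work: a single defection in round 2 triggers permanent defection by the grim player from round 3 on, so a patient player loses far more in later rounds than the one-off gain.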

The key condition is that there should be no clear end in sight. Each period, there should be a high enough probability that the game will be played again, and both players should care enough about future payoffs (they should not be too impatient).5
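To make “patient enough” concrete, here is the standard textbook calculation for the grim strategy, under assumptions that are not in the post: write T > R > P > S for the temptation, mutual-cooperation, mutual-defection and sucker payoffs, and let δ (between 0 and 1) be the weight a player puts on the next period, combining the probability that the game continues with how much the player values future payoffs. Against a grim-strategy partner, cooperating forever is worth R in every period, whereas defecting now yields T once and then P forever, because the partner never cooperates again:

$$
V_{\text{cooperate}} = R + \delta R + \delta^2 R + \cdots = \frac{R}{1-\delta},
\qquad
V_{\text{defect}} = T + \delta P + \delta^2 P + \cdots = T + \frac{\delta P}{1-\delta}.
$$

Cooperation is the better choice when

$$
\frac{R}{1-\delta} \ge T + \frac{\delta P}{1-\delta}
\iff R \ge (1-\delta)\,T + \delta P
\iff \delta \ge \frac{T-R}{T-P}.
$$

For instance, with hypothetical payoffs T = 150, R = 100 and P = 0, cooperation is sustainable whenever δ ≥ 1/3. The larger the temptation T relative to the cooperative payoff R, the more patient the players must be.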

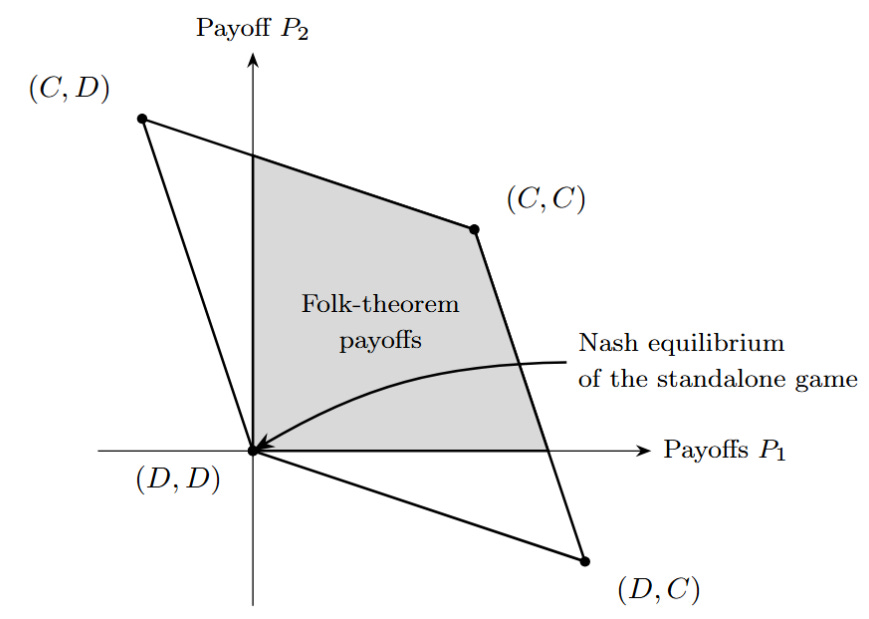

When this is the case, the Folk Theorem shows that, through strategies like the grim strategy, many more average payoffs can be sustained than the defect-defect payoffs of the one-shot game. Indeed, the players can reach any average payoff in the grey area of the graph if they are patient enough and the probability that the game is repeated is high enough.
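The grey area can be characterised with a short membership test. This sketch assumes it is the usual set: average payoffs that can be produced by mixing the four outcomes over time and that give each player at least the mutual-defection payoff. The stage payoffs are hypothetical, and the standard theorem covers points strictly above the mutual-defection payoff, so boundary points deserve more care than this sketch gives them.

```python
# Hypothetical stage-game payoffs (assumed, not read from the figure):
# T = temptation, R = reward, P = punishment, S = sucker's payoff.
T, R, P, S = 150, 100, 0, -50

# Corners of the set of feasible average payoffs, listed counter-clockwise
# for these values: (D,D), (D,C), (C,C), (C,D).
CORNERS = [(P, P), (T, S), (R, R), (S, T)]


def cross(o, a, b):
    """z-component of the cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def feasible(point):
    """True if `point` lies inside or on the convex polygon CORNERS."""
    n = len(CORNERS)
    return all(cross(CORNERS[i], CORNERS[(i + 1) % n], point) >= 0 for i in range(n))


def in_grey_area(u1, u2):
    """Feasible, and each player gets at least the mutual-defection payoff P."""
    return feasible((u1, u2)) and u1 >= P and u2 >= P


print(in_grey_area(100, 100))  # full cooperation
print(in_grey_area(50, 50))    # taking turns: (D,C) then (C,D)
print(in_grey_area(120, 120))  # no mix of outcomes produces this
print(in_grey_area(150, -50))  # player 2 would rather defect forever
```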

The conclusion is that high levels of cooperative payoffs can be reached in repeated interactions, even in situations where no cooperation is actually stable in single interactions, if players care enough about the future. Players can adopt conditionally cooperative strategies, and it is the conditionality of these strategies that enforces the behaviour of the players in the present.

In short, the folk theorem says that once interactions are repeated, players can adopt a shared understanding of the type “I’ll be nice as long as you are nice”. This is sustainable and individually rational (no need to assume that people are altruistic saints).

An equilibrium here takes the form of a commonly agreed rule of behaviour. This rule determines how to act in each period. A given rule leads to an average payoff in the long run. Consider, for instance, the grim strategy rule: people cooperate as long as everybody has always cooperated. This rule delivers the payoff associated with full cooperation (C,C) over the long run because nobody ever deviates.6

Defining cooperation formally

Given the framework above, we are in a position to define cooperation formally. We do not need to say that cooperation is something like being “nice” (which would require defining what “nice” is) or that it is what is socially “right” (which would require defining what “right” is). We only need to observe that when players play (C,C), they achieve a higher payoff by departing from the individually rational strategy of the one-shot interaction. Hence, formally, cooperation can be understood as following an equilibrium strategy in a repeated game that yields higher social payoffs than the equilibrium payoffs of the one-shot game.7 In plain English, cooperating means forgoing short-sighted, self-centred behaviour and instead working with others to do better in the long run.

Self-sustainable via built-in sanctions

Sustained cooperation is made possible by the threat of future sanctions. This threat is credible because anyone who failed to carry out a sanction would also be punished. One might fear that this cannot work because the logic leads to an “infinite regress”: if Alice does not do the right thing, Bob needs to punish her; if Bob does not punish Alice, Candice needs to punish Bob; if Candice does not punish Bob, somebody like Dan needs to punish Candice; and so on. If the population is finite, the chain must end with somebody who has to punish others without fearing punishment for failing to do so. But as Ken Binmore puts it:

[T]he proof of the folk theorem is explicit in closing the chains of responsibility. — Binmore (1998)

Bob might punish Alice because he fears Candice might punish him, and Candice might punish Bob because she fears that Alice might punish her! The punishment is often simply the withdrawal of cooperation: the severing of ties that leads to the social isolation of the punished.

If this description seems far-fetched, consider the social dynamics of “cancellation”. People who have been the target of cancellation often explain that many of their friends and colleagues reached out privately to express support but did not dare to speak publicly for fear of being sanctioned themselves for their association with the person being cancelled.8 People fear not only others’ negative judgements about what they say, but also others’ judgement about whether they themselves are displaying the correct kind of judgement. In a cancellation event, everybody may publicly express what they think is the publicly appropriate attitude because they fear that everybody else might punish them if they do not conform.

Game theory and evolutionary biology

The logic of the folk theorem provides a unifying explanation of cooperation: whenever people interact indefinitely over time, they can do better by departing from their one-period best strategy. This departure is sustainable as rational behaviour when players adopt conditionally cooperative strategies that sanction deviations.

This explanation of cooperation is very familiar to economists. Somewhat surprisingly, it is less familiar to evolutionary biologists and psychologists, who are often unaware that the folk theorem provides a general result underpinning much of what they discuss under labels such as reciprocity, indirect reciprocity, and reputation.

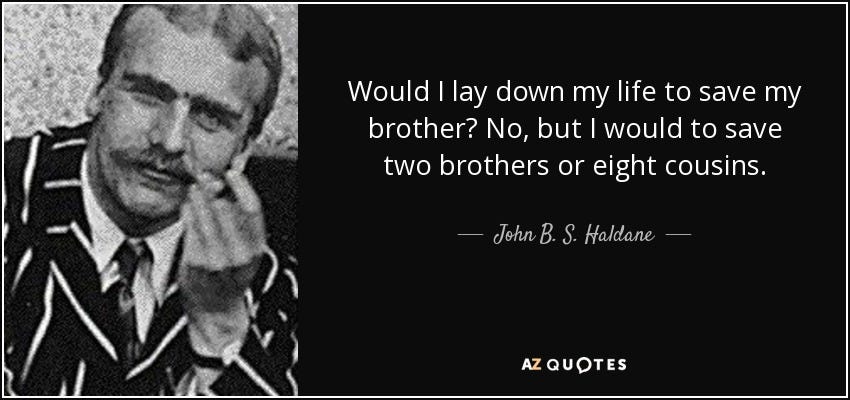

Kin selection. One important observation in animal behaviour is that cooperative behaviour often emerges between kin (e.g. parents-offspring, sisters, closely related kin groups). Here, the explanation from a game theoretic point of view is simple. Evolution selects strategies that maximise fitness at the gene level. Behaviour in one individual that promotes the survival and reproduction of the same genes in another individual will tend to be selected.

Kin share many genes. Siblings have a coefficient of relatedness of 50% (a given gene in one has a 50% chance of being shared, by common descent, with the other), uncles and nephews 25%, first cousins 12.5%, and so on. This naturally favours the selection of caring for the success of one’s kin. As the saying goes, “blood is thicker than water”.9

A simple way to think about it is that, instead of caring only about your own payoff, you also care about the payoff of your kin in proportion to how closely you are related. The evolutionary biologist J.B.S. Haldane famously quipped that he would lay down his life for two brothers or eight cousins.

Let’s take the Prisoner’s Dilemma above and consider two situations: first, the case where the gangsters are brothers; second, the case where they are identical twins. Let’s assume that the players are driven by their genes to maximise their genetic fitness. If they are brothers, each should care fully about his own material payoff and about 50% of his brother’s material payoff. If they are identical twins, each should simply care about the sum of their material payoffs. We can see right away that this “caring for the other’s payoff” can make the dilemma disappear: cooperation quickly becomes the best strategy in terms of evolutionary payoffs. The more related the players are, the more likely a Prisoner’s Dilemma situation turns into a trivial cooperative situation.10
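The brothers and twins cases can be checked with a brute-force search for equilibria. This sketch rests on assumptions: the material payoffs are hypothetical, and each player is modelled as maximising their own payoff plus r times their relative’s, where r is the coefficient of relatedness (0.5 for brothers, 1 for identical twins). This weighting is a common simplification of inclusive-fitness reasoning, not a full genetic model.

```python
from itertools import product

# Hypothetical material payoffs (assumed): (player 1, player 2).
MATERIAL = {
    ("C", "C"): (100, 100),
    ("C", "D"): (-50, 150),
    ("D", "C"): (150, -50),
    ("D", "D"): (0, 0),
}
ACTIONS = ("C", "D")


def kin_payoffs(r):
    """Each player values own payoff plus r times the relative's payoff."""
    return {
        outcome: (u1 + r * u2, u2 + r * u1)
        for outcome, (u1, u2) in MATERIAL.items()
    }


def pure_nash_equilibria(payoffs):
    """Outcomes where neither player gains by changing action alone."""
    equilibria = []
    for a1, a2 in product(ACTIONS, repeat=2):
        u1, u2 = payoffs[(a1, a2)]
        if all(u1 >= payoffs[(b, a2)][0] for b in ACTIONS) and all(
            u2 >= payoffs[(a1, b)][1] for b in ACTIONS
        ):
            equilibria.append((a1, a2))
    return equilibria


# Strangers, uncle and nephew, brothers, identical twins.
for r in (0.0, 0.25, 0.5, 1.0):
    print(r, pure_nash_equilibria(kin_payoffs(r)))
```

With these particular numbers, r = 0.25 leaves mutual defection as the only equilibrium, whereas r = 0.5 already makes cooperating the best choice whatever the other does. Where exactly the switch happens depends on the payoffs.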

Reciprocal altruism. In 1966, the evolutionary biologist George Williams published a book, Adaptation and Natural Selection, where he argued that a lot of apparently “group-beneficial” helping can, in principle, be compatible with natural selection when helping tends to be repaid inside stable social relationships.

The evolutionary biologist Robert Trivers built on this insight and published, in 1971, one of the most influential papers in evolutionary biology, The Evolution of Reciprocal Altruism. In it, he describes how conditional rules of the form “I scratch your back if you scratch mine” could naturally evolve and explain the prevalence of cooperation in the animal world.

For biologists, Trivers’ theory is the leading explanation of cooperation. Trivers’ insights are quite fascinating, and he discusses a rich texture of social behaviour which goes well beyond the result from the folk theorem in the simplified setting of the Prisoner’s Dilemma. He also grounded his arguments in compelling behavioural data about cooperation in the animal world across many different species.

However, it would be wrong to think that Williams’ and Trivers’ contributions were independent of the insights gained from game theory. Williams was aware of the discussion in game theory and specifically saw an organism’s behaviour as the best response to an environment shaped by other organisms’ behaviour. Even more tellingly, Trivers himself was well aware of the game-theory literature in which repeated Prisoner’s Dilemmas were being discussed and studied. He even cites Luce and Raiffa’s book, in which they had described cooperation in the repeated game as a “quasi-equilibrium”, albeit an unstable one.

The relationship between two individuals repeatedly exposed to symmetrical reciprocal situations is exactly analogous to what game theorists call the Prisoner’s Dilemma (Luce and Raiffa, 1957). — Trivers (1971)

One can therefore see game theorists’ and Trivers’ contributions as complementary. The Folk Theorem provides the formal proof that cooperation is an individually rational strategy, which could therefore be sustainable in the long run, provided players can adopt appropriate rules of cooperation. Trivers made a compelling case about how such behaviour can emerge from evolution, how it is actually widely present in the animal world, and the type of mechanisms that ensure its sustainability.

Indirect reciprocity. In 1987, the evolutionary biologist Richard Alexander published the influential book The Biology of Moral Systems, where he argued that the one-to-one reciprocity of reciprocal altruism and repeated Prisoner’s Dilemmas could not explain cooperation in large-scale societies. Instead, he proposed the term indirect reciprocity to capture the fact that we cooperate not only with people who have cooperated with us in the past but also with people who have cooperated with others.

Independently of this development in evolutionary biology, game theorists extended Folk Theorem results to interactions in large populations where people are rematched with others each period. In such a setting, cooperation can be maintained by keeping a public record of each person’s past cooperative behaviour and by making everybody’s behaviour conditional on these public records. An equivalent of the grim strategy in that context is that if one person ever defects, nobody ever cooperates with him again.11 Here again, strategies of conditional cooperation, like the grim strategy, can lead to cooperation being an equilibrium. Everyone cooperates with those who have cooperated in the past and everyone cares about their public record to be able to benefit from future gains from cooperation. These results provide a formal proof that the type of cooperation described by Alexander can be an equilibrium and therefore socially stable. 9
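A toy simulation can illustrate community enforcement with a public record. It is loosely in the spirit of the random-matching results described above, not a reproduction of any specific published model, and every rule in it is a hypothetical assumption: an even number of players are randomly paired each round; everyone sees a public good/bad record; “discriminators” cooperate only with partners in good standing; and a player’s record turns bad, permanently, if they defect against someone in good standing, while defecting against someone in bad standing counts as legitimate punishment and leaves the record intact. That last rule is what keeps punishers from being punished.

```python
import random

# Hypothetical stage-game payoffs (assumed): T > R > P > S.
T, R, P, S = 150, 100, 0, -50
PAYOFFS = {
    ("C", "C"): (R, R),
    ("C", "D"): (S, T),
    ("D", "C"): (T, S),
    ("D", "D"): (P, P),
}


def discriminator(partner_in_good_standing: bool) -> str:
    """Cooperate only with partners whose public record is good."""
    return "C" if partner_in_good_standing else "D"


def always_defect(partner_in_good_standing: bool) -> str:
    return "D"


def simulate(n_players=10, rounds=50, defectors=(0,), seed=1):
    """Random pairwise matching with a shared public record (n_players must be even)."""
    rng = random.Random(seed)
    strategy = {
        i: always_defect if i in defectors else discriminator for i in range(n_players)
    }
    good = {i: True for i in range(n_players)}  # everyone starts in good standing
    totals = {i: 0 for i in range(n_players)}
    for _ in range(rounds):
        order = list(range(n_players))
        rng.shuffle(order)
        updated = dict(good)
        for a, b in zip(order[0::2], order[1::2]):
            move_a, move_b = strategy[a](good[b]), strategy[b](good[a])
            pay_a, pay_b = PAYOFFS[(move_a, move_b)]
            totals[a] += pay_a
            totals[b] += pay_b
            # Defecting on someone in good standing ruins your record for good;
            # defecting on someone in bad standing is treated as punishment.
            if move_a == "D" and good[b]:
                updated[a] = False
            if move_b == "D" and good[a]:
                updated[b] = False
        good = updated
    return totals, good


totals, good = simulate()
print("defector total:", totals[0])
print("average cooperator total:", sum(totals[i] for i in range(1, 10)) / 9)
```

Whatever the seed, the defector collects the temptation payoff once (150 here) and is shunned from then on, while the discriminators keep their good standing and earn the cooperative payoff in almost every round.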

These extensions of the Folk Theorem to cooperation in large populations stress the importance of reputation: our public record as a good member of the community. It determines our future opportunities to benefit from cooperation with other people. Everybody cooperates with everyone who has a good reputation because it is the best thing to do. Honesty is the best policy because a loss of reputation comes at a substantial cost: lost opportunities to cooperate with others in the future. For that reason, we care a lot about our reputation. It is built slowly and lost quickly.

Partner choice. In real life, we choose, to a large extent, our friends, collaborators, exchange partners, and so on. Roberts (1998) and Baumard, André and Sperber (2013) developed evolutionary accounts of cooperation that capture this fact. They made the case that understanding the logic of cooperation requires more than understanding what happens within a relationship; it requires understanding how relationships form in the first place. Much of what people do is identify good partners and try to be selected as partners by others. In this view, building a good reputation is key, not just to convince our current partners to cooperate with us, but to find good partners in the first place.

The Folk Theorem has also been extended to such a setting.12 In that case, cooperation can be sustained by severing ties with defectors and refusing to partner with them again, which in some environments leaves them unmatched. In short, we can shun people who have not proved to be cooperators. This helps explain why so much human activity involves gossip, that is, exchanging information about other people’s good and bad deeds. While gossip has a bad name, its prevalence reflects the importance of forming sound judgements about others when considering them as potential partners.

Implications

The Folk Theorem as a fundamental explanation of how and why cooperation emerges in evolution

The insight of the folk theorem is quite deep: opportunities for mutually beneficial cooperation naturally emerge when players face the prospect of interacting repeatedly over an indefinite period of time.

In a sense, the gains from cooperation are up for the taking. Purely self-centred agents who stumble on rules of conditional cooperation, or who are smart enough to devise such rules, will greatly benefit from working together.

The folk theorem captures the general strategic logic underlying most non-kin explanations of cooperation. The main genuinely distinct alternative is kin selection, where cooperation can be favoured even without the strategic enforcement logic of repeated interaction because genetic relatedness partly aligns evolutionary interests.13

There are endless ways to cooperate, hence culture matters

The folk theorem has two major implications. The first one is that cooperation is possible and can be individually rational. The second one is that cooperation can take many, many forms. As shown with the graph of the average payoffs reachable with the logic of the folk theorem, there is an infinite number of possibilities. More importantly, there are typically many ways to secure a given equilibrium payoff. That is, for every point in the shaded area, there are several rules of behaviour which will deliver that average payoff.

This result has been received with quite a bit of despair by game theorists. Having a unique equilibrium in a game allows game theorists to make a “prediction” about how rational players would play that game. Having an infinite number of equilibria means that the model does not narrow down a specific prediction. It is seen as a weakness of a game-theoretic model.

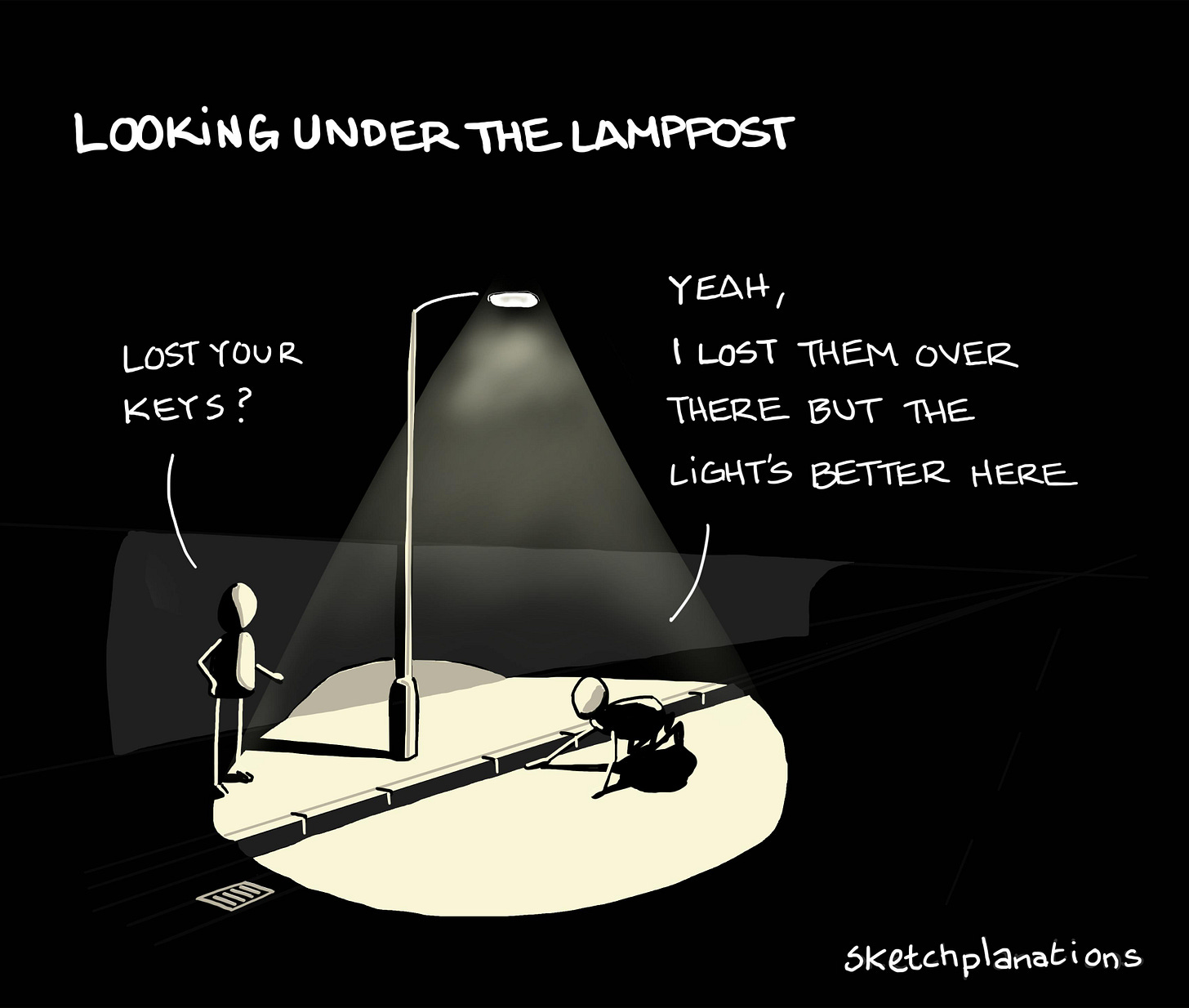

The profession’s answer has largely been to look away from repeated games. Instead, influenced in part by the rise of behavioural economics and the inclusion of psychology in economics, economists have studied how players behave in one-shot games. As players typically do not behave as if they were purely self-interested and motivated only by material gain, one-shot games have given rise to a large literature on social preferences.

But this methodological move, understandable given the logic of the sociology of science, is most certainly misguided. Whatever social preferences we have, they must, in all likelihood, have been selected for us to successfully navigate situations of repeated interactions. In repeated interactions, our social preferences are useful for finding cooperators, being fair, and avoiding being seen as a defector. These social preferences likely drive people’s behaviour in one-shot experiments because one-shot interactions are unusual and people naturally use their built-in cognitive tools to try to make sense of these situations and decide how to act. By focusing on studying “social preferences” in one-shot games, economists have focused on simulacra: the effects of preferences forged to play in repeated games when activated in unusual situations where interactions are one-shot (and typically anonymous).

While this literature has produced a tremendous number of papers, it has likely been a strategic wrong turn for making sense of cooperative preferences, driven by economists’ desire to say “something” about games with one or only a few equilibria.

A classic joke in economics recounts the tale of an economist looking for his keys under a lamppost. When someone asks where he lost them, he answers that he lost them quite a bit further away, but that it is only under the lamppost that there is light to look at the ground. Similarly, the fact that one-shot games often have one or very few equilibria made these games a preferred setting for the investigation of cooperative preferences even though the real explanations of these preferences lie in a very different area, the area of repeated games.

The fact that there are countless possible ways to cooperate should have led economists down another path: understanding how cooperative equilibria are selected within a given society. This path requires thinking about how different cultural norms of cooperation evolve in societies. It is a path taken by a few scholars whose influence in economics should be much greater: Robert Sugden, Cristina Bicchieri and Ken Binmore.14

The game theory of cooperation explains the key characteristics of human cooperation: what it is and how it works. It provides a key insight: cooperation arises even between people who might have conflicting incentives because people can often produce higher total payoffs by working together. If they interact over time, they can design rules for conditional cooperation that allow them to produce these higher payoffs and to share them.

Seen in that light, there is nothing mysterious about cooperation. There is no need to assume that people are altruistic in a way that would conflict with the laws of evolution. Our moral sense, with its strong emphasis on reciprocity and conditional cooperation, is designed to navigate precisely these types of situations where gains can be obtained from cooperation if it is mutually enforced by agents playing nice as long as others do.

References

Alexander, R.D. (1987) The Biology of Moral Systems. New York: Aldine de Gruyter.

Aumann, R.J. (1959) ‘Acceptable Points in General Cooperative n-Person Games’, in Luce, R.D. and Tucker, A.W. (eds.) Contributions to the Theory of Games IV (Annals of Mathematics Studies, 40). Princeton, NJ: Princeton University Press, pp. 287–324.

Axelrod, R. (1984) The Evolution of Cooperation. New York: Basic Books.

Baumard, N., André, J-B. and Sperber, D. (2013) ‘A Mutualistic Approach to Morality: The Evolution of Fairness by Partner Choice’, Behavioral and Brain Sciences, 36(1), pp. 59–78.

Bicchieri, C. (2006) The Grammar of Society: The Nature and Dynamics of Social Norms. Cambridge: Cambridge University Press.

Binmore, K.G. (1994) Game Theory and the Social Contract. Vol. 1, Playing Fair. Cambridge, MA: MIT Press.

Binmore, K.G. (1998) Game Theory and the Social Contract. Vol. 2, Just Playing. Cambridge, MA: MIT Press.

Darwin, C. (1871) The Descent of Man, and Selection in Relation to Sex. London: John Murray.

Dawkins, R. (1976) The Selfish Gene. Oxford: Oxford University Press.

Ellison, G. (1994) ‘Cooperation in the Prisoner’s Dilemma with Anonymous Random Matching’, The Review of Economic Studies, 61(3), pp. 567–588.

Flood, M.M. (1952) Some Experimental Games. Research Memorandum RM-789-1. Santa Monica, CA: The RAND Corporation.

Gintis, H. (2006) ‘Behavioral Ethics Meets Natural Justice’, Politics, Philosophy & Economics, 5(1), pp. 5–32.

Kandori, M. (1992) ‘Social Norms and Community Enforcement’, The Review of Economic Studies, 59(1), pp. 63–80.

Laclau, M. (2012) ‘A Folk Theorem for Repeated Games Played on a Network’, Games and Economic Behavior, 76(2), pp. 711–737.

Luce, R.D. and Raiffa, H. (1957) Games and Decisions: Introduction and Critical Survey. New York: John Wiley & Sons.

Roberts, G. (1998) ‘Competitive Altruism: From Reciprocity to the Handicap Principle’, Proceedings of the Royal Society B: Biological Sciences, 265(1394), pp. 427–431.

Schumacher, H. (2015) ‘On Repeated Games with Endogenous Matching Decision’, Journal of Institutional and Theoretical Economics (JITE), 171(3), pp. 544–564.

Sugden, R. (1986) The Economics of Rights, Cooperation and Welfare. Oxford: Basil Blackwell.

Trivers, R.L. (1971) ‘The Evolution of Reciprocal Altruism’, The Quarterly Review of Biology, 46(1), pp. 35–57.

Williams, G.C. (1966) Adaptation and Natural Selection: A Critique of Some Current Evolutionary Thought. Princeton, NJ: Princeton University Press.

The famous “two prisoners” story and the name are usually credited to Albert Tucker, who used it to popularise the payoff structure.

For readers passionate about game theory, here is the fascinating answer from John Nash in full:

The flaw in this experiment as a test of equilibrium point theory is that the experiment really amounts to having the players play one large multimove game. One cannot just as well think of the thing as a sequence of independent games as one can in zero-sum cases. There is much too much interaction, which is obvious in the results of the experiment.

Viewing it as a multimove game a strategy is a complete program of action, including reactions to what the other player has done. In this view it is still true the only real absolute equilibrium point is for A always to play 2, B always 1.

However, the strategies: A plays 1 till B plays 1, then 2 ever after, B plays 2 'till A plays 2, then 1 ever after, are very nearly at equilibrium and in a game with an indeterminate stop point or an infinite game with interest on utility it is an equilibrium point.

Since 100 trials are so long that the Hangman's paradox cannot possibly be well reasoned through on it, it's fairly clear that one should expect an approximation to this behavior which is most appropriate for indeterminate end games with a little flurry of aggressiveness at the end and perhaps a few sallies, to test the opponent's mettle during the game.

It is really striking, however, how inefficient AA and JW were in obtaining the rewards. One would have thought them more rational.

If this experiment were conducted with various different players rotating the competition and with no information given to a player of what choices the others have been making until the end of all the trials, then the experimental results would have been quite different, for this modification of procedure would remove the interaction between the trials. — Nash (cited by Flood and Dresher 1952)

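The key part of Nash’s answer is his remark that, “in a game with an indeterminate stop point or an infinite game with interest on utility”, the conditional strategies he describes form an equilibrium point. Here he already lays out the result of the “Folk Theorem”, illustrating that it was well understood by game theorists before Aumann published the first formal proof in 1959.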

The full extract from Luce and Raiffa describes the logic very well (note that I have replaced the Greek-letter labels of the strategies in the original with “cooperate” and “defect” for readability):

Let us suppose that the players have in one way or another arrived at a pattern of selecting (cooperate, cooperate). Since player 1 then has reason to suppose that his opponent will “probably” choose cooperate, he may be tempted to squeeze a bit more out of the next game by choosing defecting. However, he may—and probably should—anticipate that the occurrence of (defect, cooperate) will ensure player 2 defecting in the next game, in which case he is driven to play defect in that game, and so in total he will lose more than is compensated for by a defect instead of cooperating now. Thus, we may argue that his contemplation of the resulting chaos tends to keep 1 in line, and, if he is unable to reason so clearly about the future, a little experience should soon set him straight. From these arguments, we see that in the repeated game the repeated selection of (cooperate, cooperate) is in a sort of quasi-equilibrium: it is not to the advantage of either player to initiate the chaos that results from not conforming, even though the non-conforming strategy is profitable in the short run (one trial).

It is intuitively clear that this quasi-equilibrium pair is extremely unstable; any loss of “faith” in one’s opponent sets up the chain which leads to loss for both players.
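The “chain” in this extract can be made concrete with two textbook rules that the extract itself does not name: Tit-for-Tat (copy the opponent’s last move) and a grim trigger (defect forever once the opponent has defected). This is a minimal sketch, assuming both players follow the same rule and that player 1 defects once by mistake in the third period (a hypothetical slip, such as a misread signal):

```python
def tit_for_tat(opp_history):
    """Cooperate first, then copy the opponent's previous move."""
    return opp_history[-1] if opp_history else "C"


def grim_trigger(opp_history):
    """Cooperate until the opponent has defected once, then defect forever."""
    return "D" if "D" in opp_history else "C"


def play_with_one_error(strategy, rounds, error_round):
    """Both players use `strategy`. Player 1's move in `error_round` is flipped
    to 'D' by mistake (a hypothetical slip). Returns the list of (move1, move2)."""
    h1, h2 = [], []
    for t in range(rounds):
        m1 = strategy(h2)  # each player reacts to the other's past moves
        m2 = strategy(h1)
        if t == error_round:
            m1 = "D"  # the accidental defection
        h1.append(m1)
        h2.append(m2)
    return list(zip(h1, h2))


print(play_with_one_error(tit_for_tat, 6, 2))
# [('C', 'C'), ('C', 'C'), ('D', 'C'), ('C', 'D'), ('D', 'C'), ('C', 'D')]
print(play_with_one_error(grim_trigger, 6, 2))
# [('C', 'C'), ('C', 'C'), ('D', 'C'), ('C', 'D'), ('D', 'D'), ('D', 'D')]
```

Under Tit-for-Tat the single slip echoes back and forth between the players; under the grim trigger it ends in permanent mutual defection. Either way, both players end up worse off than under uninterrupted (C, C).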

The idea that cooperation is fragile is also expressed by Dawkins in The Selfish Gene:

[P]acts or conspiracies based on long-term best interests teeter constantly on the brink of collapse due to treachery from within. Dawkins (1976)

In fact, because cooperation can be sustained by an equilibrium, not just a “quasi-equilibrium”, there is no reason to see it as inherently unstable or as something bound to collapse at any moment.
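The comparison behind this claim can be written down directly. This is a minimal sketch, assuming the classic payoffs used in Axelrod’s tournaments (T = 5, R = 3, P = 1, S = 0), a per-period discount factor `delta`, and a grim trigger in which any defection is answered by mutual defection forever. Because mutual defection is an equilibrium of the one-shot game, that threat is credible, which is what allows cooperation to be a subgame perfect equilibrium once players are patient enough:

```python
# Classic Prisoner's Dilemma payoffs (an assumption for illustration):
# T = temptation, R = reward for mutual cooperation,
# P = punishment for mutual defection, S = sucker's payoff.
T, R, P, S = 5, 3, 1, 0


def value_conform(delta):
    """Discounted value of cooperating forever: R + delta*R + delta**2*R + ..."""
    return R / (1 - delta)


def value_deviate(delta):
    """Defect once against a cooperator (earn T), then get P in every later period."""
    return T + delta * P / (1 - delta)


def critical_delta():
    """Smallest delta at which conforming is at least as good as deviating.
    Solves R / (1 - d) >= T + d * P / (1 - d) for d."""
    return (T - R) / (T - P)


print(critical_delta())                        # 0.5
print(value_conform(0.9), value_deviate(0.9))  # about 30 versus 14
```

With these numbers, the future must weigh at least half as much as the present for cooperation to be self-enforcing; below that threshold, the one-shot gain from defecting outweighs the stream of losses that follows.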

This expression originates from the highly influential book The Evolution of Cooperation (1984) by political scientist Robert Axelrod, who showed, via computerised competitions, that cooperative strategies do better over the long run in repeated Prisoner's Dilemma games than short-sighted selfish strategies like defecting all the time.
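A toy round-robin in the spirit of Axelrod’s computer tournaments shows the kind of comparison involved; it is an illustration, not a reproduction of his tournaments. Assumptions: classic payoffs T = 5, R = 3, P = 1, S = 0; only four simple strategies; 10 rounds per match; each strategy also meets a copy of itself.

```python
# Classic payoffs (an assumption): (row payoff, column payoff).
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
          ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


# Each strategy sees only the opponent's past moves.
def always_cooperate(opp):
    return "C"


def always_defect(opp):
    return "D"


def tit_for_tat(opp):
    return opp[-1] if opp else "C"


def grim_trigger(opp):
    return "D" if "D" in opp else "C"


def match(s1, s2, rounds):
    """Play `rounds` periods between two strategies and return both totals."""
    h1, h2 = [], []
    score1 = score2 = 0
    for _ in range(rounds):
        m1, m2 = s1(h2), s2(h1)
        p1, p2 = PAYOFF[(m1, m2)]
        score1 += p1
        score2 += p2
        h1.append(m1)
        h2.append(m2)
    return score1, score2


def tournament(strategies, rounds=10):
    """Round-robin: every pair meets once and each strategy also meets a copy of itself."""
    names = list(strategies)
    totals = dict.fromkeys(names, 0)
    for i, a in enumerate(names):
        for b in names[i:]:
            score_a, score_b = match(strategies[a], strategies[b], rounds)
            totals[a] += score_a
            if b != a:
                totals[b] += score_b
    return totals


print(tournament({"AllC": always_cooperate, "AllD": always_defect,
                  "TFT": tit_for_tat, "Grim": grim_trigger}))
# {'AllC': 90, 'AllD': 88, 'TFT': 99, 'Grim': 99}
```

The two reciprocating strategies tie for first place. The ranking depends on the pool, though: if strategies did not also meet copies of themselves, always-defect would finish first (78 against 69) by exploiting always-cooperate.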

There should not be any clear end in sight. If players knew which period was the last, they would not cooperate in it, since there would be no future cooperation left to protect. Knowing this, they would not cooperate in the penultimate period either: whatever they do there, nobody will cooperate in the last period. By the same reasoning, they would not cooperate in the period before that, and so on. Following this logic to its full conclusion, they would not cooperate in any period.
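The unravelling argument is backward induction, which can be sketched as follows. This is a minimal illustration, assuming classic payoffs (T = 5, R = 3, P = 1, S = 0) and a symmetric game, so that both players reason in the same way:

```python
# Row player's stage payoffs (classic values, an assumption): T=5, R=3, P=1, S=0.
ROW_PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def backward_induction(n_periods):
    """Equilibrium path of a Prisoner's Dilemma repeated a known number of times.

    Solves the last period first. Play in the periods already solved is fixed,
    so it adds the same `future` payoff to every outcome of the current period
    and cannot reward cooperation today: each period is just the one-shot game.
    """
    path = []
    future = 0  # payoff from the periods already solved (the same whatever happens now)
    for _ in range(n_periods):
        best = {opp: max("CD", key=lambda m: ROW_PAYOFF[(m, opp)] + future)
                for opp in "CD"}
        assert best == {"C": "D", "D": "D"}  # defecting is the best reply to either move
        path.append(("D", "D"))  # by symmetry, both players defect in this period
        future += ROW_PAYOFF[("D", "D")]
    path.reverse()  # periods were solved from last to first
    return path


print(backward_induction(4))  # [('D', 'D'), ('D', 'D'), ('D', 'D'), ('D', 'D')]
```

The key line is the constant `future`: because it is identical for every current choice, it drops out of the comparison, which is exactly why a known final period unravels cooperation.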

Note that any point in the grey area can be reached if the players are patient enough. For example, another rule of cooperation could be for the players to take turns defecting: in one period they play (D,C), in the next (C,D). This “cooperation” is better than playing (D,D) all the time: it gives players an average payoff of 50 each instead of 0. But it is not as good as the rule of cooperating (C,C) in every period, which gives an average payoff of 100 over time.
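The averages in this example can be checked numerically. This is a minimal sketch, assuming normalised discounted payoffs and hypothetical values for the temptation and sucker’s payoffs (T = 150, S = −50), chosen only so that T + S = 100, as the average of 50 for alternating defection implies:

```python
from itertools import cycle, islice

# R = 100 and P = 0 follow the text. T = 150 and S = -50 are hypothetical values,
# chosen only so that T + S = 100, as the text's average of 50 implies.
PAYOFF = {("C", "C"): (100, 100), ("D", "D"): (0, 0),
          ("D", "C"): (150, -50), ("C", "D"): (-50, 150)}


def average_payoffs(pattern, delta, horizon=10_000):
    """Normalised discounted payoffs (1 - delta) * sum_t delta**t * u_t for a
    repeating pattern of action profiles, truncated after `horizon` periods."""
    totals = [0.0, 0.0]
    for t, profile in enumerate(islice(cycle(pattern), horizon)):
        weight = (1 - delta) * delta ** t
        for player in (0, 1):
            totals[player] += weight * PAYOFF[profile][player]
    return tuple(totals)


alternate = [("D", "C"), ("C", "D")]
print(average_payoffs(alternate, 0.9))      # roughly (55.3, 44.7): the first defector gains
print(average_payoffs(alternate, 0.99))     # roughly (50.5, 49.5)
print(average_payoffs([("C", "C")], 0.99))  # roughly (100.0, 100.0)
```

As `delta` approaches 1, the advantage of whoever defects first fades and both averages approach 50, which is one way to read “if the players are patient enough”.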

For game theorist readers: cooperating means following a strategy that is part of a subgame perfect equilibrium whose payoffs Pareto dominate the payoffs of every equilibrium of the stage game.
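The Pareto part of this definition can be checked mechanically. This is a minimal sketch, assuming classic Prisoner’s Dilemma payoffs (T = 5, R = 3, P = 1, S = 0). It only enumerates pure-strategy equilibria of the stage game, which is enough here because defection is strictly dominant, so no mixed equilibrium exists. Subgame perfection is a separate incentive check on the repeated game:

```python
from itertools import product

# Classic Prisoner's Dilemma payoffs (an assumption): (row, column).
T, R, P, S = 5, 3, 1, 0
PAYOFF = {("C", "C"): (R, R), ("C", "D"): (S, T),
          ("D", "C"): (T, S), ("D", "D"): (P, P)}
ACTIONS = ("C", "D")


def pure_nash_equilibria(payoff):
    """Action profiles where neither player gains by switching unilaterally."""
    equilibria = []
    for a1, a2 in product(ACTIONS, repeat=2):
        u1, u2 = payoff[(a1, a2)]
        row_ok = all(u1 >= payoff[(b, a2)][0] for b in ACTIONS)
        col_ok = all(u2 >= payoff[(a1, b)][1] for b in ACTIONS)
        if row_ok and col_ok:
            equilibria.append((a1, a2))
    return equilibria


def pareto_dominates(x, y):
    """True if x is at least as good as y for everyone and strictly better for someone."""
    return all(a >= b for a, b in zip(x, y)) and any(a > b for a, b in zip(x, y))


stage_eq = pure_nash_equilibria(PAYOFF)
print(stage_eq)  # [('D', 'D')]
print(all(pareto_dominates(PAYOFF[("C", "C")], PAYOFF[e]) for e in stage_eq))  # True
```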

Many, if not most, people who have been cancelled or have faced a cancellation attempt describe this logic. Here are some high-profile cases:

J.K. Rowling described colleagues who publicly condemned her but then privately contacted her (directly or via third parties) to check whether they were “still friends.” Kathleen Stock, a philosopher who resigned from the University of Sussex after a campaign against her gender-critical views, described colleagues as being “privately supportive but publicly silent”. Bari Weiss, who resigned from her position as a columnist at the New York Times amid conflict over the paper’s editorial culture and political climate, wrote: “Too wise to post on Slack, they write to me privately…”

A potentially counterintuitive fact is that all humans are genetically very close: two unrelated people typically have DNA sequences that are about 99.9% identical. The “siblings share 50%” claim is therefore not about overall genetic similarity. It is about the small fraction of the genome where people actually differ. At those variable spots, if you carry a particular variant, a full sibling has roughly a 50–50 chance of carrying the same one (because you each inherit a random half of each parent’s variants). A random person is much less likely to share that specific variant, especially when it is rare. This difference is what natural selection can act on: selection responds to who is more likely than average to carry the same variant, not to the overwhelming genetic similarity that everyone already shares.
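The 50–50 figure can be checked by enumerating the equally likely inheritance outcomes. This is a minimal sketch, assuming a rare variant carried by only one parent, on one of that parent’s two copies:

```python
from fractions import Fraction
from itertools import product


def sibling_shares_variant():
    """P(a full sibling carries a variant | you carry it).

    Assumes (for illustration) a rare variant carried by only one parent, on one
    of that parent's two copies. Each child inherits one of the two copies at
    random, independently of the other child.
    """
    carrier_parent = ("variant", "other")
    outcomes = list(product(carrier_parent, repeat=2))  # (your copy, sibling's copy)
    you_carry = [o for o in outcomes if o[0] == "variant"]
    both_carry = [o for o in you_carry if o[1] == "variant"]
    return Fraction(len(both_carry), len(you_carry))


print(sibling_shares_variant())  # 1/2
```

A random person, by contrast, carries the same rare variant only with a probability close to its (small) population frequency.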

That is why the strong form of cooperation observed in social insects typically appears in the order Hymenoptera (ants, bees and wasps), where colonies are made up of sisters who, because of the haplodiploid mode of reproduction, share on average 75% of their genes by descent (when the queen has mated with a single male). This places them halfway between ordinary full sisters (50%) and identical twins (100%).
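The 75% figure follows from the same kind of enumeration. This is a minimal sketch, assuming a diploid mother and a single father for both sisters. It compares a haploid father, as in Hymenoptera, with a diploid one:

```python
from fractions import Fraction
from itertools import product


def sister_relatedness(father_copies):
    """Chance that a gene copy picked at random in one sister is the same
    parental copy (identical by descent) in her full sister.

    `father_copies` is ("F",) for a haploid father (as in Hymenoptera) or
    ("F1", "F2") for a diploid father. The mother is diploid and, as an
    assumption, both sisters have the same single father.
    """
    mother_copies = ("M1", "M2")
    daughters = list(product(mother_copies, father_copies))  # (maternal, paternal), equally likely
    matches = total = 0
    for d1, d2 in product(daughters, repeat=2):
        for slot in (0, 1):  # 0 = the copy from the mother, 1 = the copy from the father
            total += 1
            matches += d1[slot] == d2[slot]
    return Fraction(matches, total)


print(sister_relatedness(("F",)))        # 3/4 (haplodiploid sisters)
print(sister_relatedness(("F1", "F2")))  # 1/2 (ordinary diploid sisters)
```

The paternal half is always shared because a haploid father passes his whole genome to every daughter; only the maternal half is a coin toss.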

If a public record makes it possible to identify a defector, the grim strategy can be to punish him specifically (Kandori, 1992). If there is no public record but it is possible to know that someone has defected, a grim strategy can be for everyone to stop cooperating altogether (Ellison, 1994).
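The no-record case works through contagion: defection spreads from partner to partner until nobody cooperates. This is a heavily simplified sketch of that idea, not a reproduction of either paper’s model. It assumes random pairwise matching, an even number of players, and that anyone who has defected or has met a defector defects in all later matches:

```python
import random


def contagion(n_players=10, rounds=50, seed=0):
    """Simplified contagious punishment (an illustrative assumption, not a full model).

    Players (an even number) are randomly paired each round. Player 0 defects at
    the start, and anyone who has defected or met a defector defects in all later
    matches, since they cannot tell who started it. Returns the number of
    defectors after each round.
    """
    rng = random.Random(seed)
    defecting = [False] * n_players
    defecting[0] = True
    history = []
    for _ in range(rounds):
        order = list(range(n_players))
        rng.shuffle(order)
        for a, b in zip(order[::2], order[1::2]):
            if defecting[a] or defecting[b]:
                defecting[a] = defecting[b] = True  # the partner of a defector switches too
        history.append(sum(defecting))
    return history


print(contagion()[:5])  # the count of defectors can only grow
```

With a public record, punishment could instead be aimed at player 0 alone, which avoids this collateral collapse of cooperation.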

See Schumacher (2015, JITE) for a folk theorem in which players choose their partner each period, and Laclau (2012) for a version in which players can have several ties each period (forming a network). A punished player has his ties severed, potentially leading to full ostracism.

For the evolutionary biologist reader: multilevel group selection is not really a third type of justification. It relies on the same logic (and a formally identical equation) as kin selection: the idea that an altruistic action can have overall positive indirect benefits.

For game theorist readers: there have been few developed pushbacks against Binmore’s framework, mainly because it has been influential only in a small corner of the discipline, as economists turned their focus to one-shot games.

One notable exception is the review of Binmore’s book Natural Justice by the evolutionary-minded economist Herbert Gintis. Gintis was an important contributor to the literature on the evolution of cooperation, and his piece is one of the rare developed pushbacks Binmore has received. For this reason, it is worth discussing, as it helps test the foundations of Binmore’s framework.

Gintis’s central complaint is that Binmore treats the folk theorem as a reliable foundation for moral rules, when it is really a very permissive existence result. In the abstract of his review he puts it bluntly: using the folk theorem as the “analytical basis” of Natural Justice is “a mistake”, because the cooperative equilibria it delivers “lack dynamic stability in games with several players”, even if Binmore’s dependence on it is “more tactical than strategic”.

In the sorts of repeated games where the folk theorem applies, there is typically a whole continuum of cooperative equilibria. If behaviour drifts, or if players coordinate on a slightly different equilibrium, nothing in the folk theorem itself guarantees that players return to the original one. Gintis adds that even small amounts of noise can undermine these cooperative equilibria, and he stresses that the problem is not just existence but stability and selection (Gintis, 2006).
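The multiplicity Gintis points to is easy to exhibit. This is a minimal sketch, assuming a grim trigger as the punishment, a discount factor of 0.9, and payoffs with R = 100 and P = 0 as in the earlier numerical example plus hypothetical values T = 150 and S = −50. It tests whether three quite different repeating patterns can each be sustained as an equilibrium:

```python
# R = 100 and P = 0 echo the earlier numerical example; T = 150 and S = -50 are
# hypothetical values (an assumption). Profiles are (player 1 move, player 2 move).
PAYOFF = {("C", "C"): (100, 100), ("D", "D"): (0, 0),
          ("D", "C"): (150, -50), ("C", "D"): (-50, 150)}
FLIP = {"C": "D", "D": "C"}


def value_from(pattern, start, player, delta, horizon=2_000):
    """Discounted payoff to `player` from following a repeating pattern from `start`."""
    n = len(pattern)
    return sum(delta ** k * PAYOFF[pattern[(start + k) % n]][player]
               for k in range(horizon))


def sustainable(pattern, delta):
    """Check every period and player under a grim trigger: a deviation earns the
    one-shot payoff, then (D, D) forever, worth P = 0 per period."""
    for t, profile in enumerate(pattern):
        for player in (0, 1):
            deviation = list(profile)
            deviation[player] = FLIP[profile[player]]
            if PAYOFF[tuple(deviation)][player] > value_from(pattern, t, player, delta):
                return False
    return True


candidates = {
    "always (C, C)": [("C", "C")],
    "take turns defecting": [("D", "C"), ("C", "D")],
    "always (D, D)": [("D", "D")],
}
for name, pattern in candidates.items():
    print(name, sustainable(pattern, 0.9))  # all three print True
```

Longer cycles that mix these profiles in other proportions can typically be sustained too at this level of patience, and nothing in the check says which pattern a group will settle on: that is the selection problem.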

I see this criticism as driven by Gintis’s evolutionary game-theoretic approach, in which agents are modelled as automata following rules of behaviour and equilibria are stable patterns of these rules. It is in that framework that “drift” matters, since the system only stays in equilibrium if rules are locked in a stable pattern of interaction. If several stable patterns of interaction with very similar rules exist, then it is not clear why a social system would not drift between them.

Binmore’s view is, however, different. He sees equilibria as sustained by strategic agents’ common knowledge of the rules. In Binmore’s framework there is no need to assume that agents are simple automata, and common knowledge clearly creates inertia. If people expect people to expect people to expect a rule of behaviour A, then when behaviour is nudged slightly towards A’ (say, because some famous people suggest adopting A’ instead of A), the common knowledge of A will likely push against this change. In Binmore’s account, social norms of cooperation do evolve, but this evolution takes time: there is a lot of inertia in cultural evolution.

The second criticism made by Gintis is on the empirical side: the conditions that make folk-theorem cooperation work well are, in his view, not the conditions under which human cooperation evolved. He argues that discount factors were likely often low because life was risky and groups were fragile, so the “be patient and punish later” logic is weaker than the theory assumes.

On that point, I think the lives of our ancestors were short only relative to ours. Human lives are much longer than those of other animals in which cooperation is observed. If anything, our social cognition, and in particular our ability to think about the future and simulate how our behaviour could affect later interactions, likely lengthened our relevant time horizon dramatically relative to our close primate relatives.

Finally, Gintis is concerned that the folk-theorem versions with plausible stability properties rely on trigger-style breakdowns of cooperation, which may work in small groups with accurate public signals but produce low cooperation in larger groups when signals are private or noisy, and are rarely observed as the main enforcement mechanism.

If you instead try to target punishments at the defector, you hit a second-order free-rider problem: in a world of purely self-regarding agents, nobody wants to pay to punish, so you need punishments for non-punishers. Gintis argues these “second-order” punishments have poor stability properties and are “virtually never observed” in groups beyond a few people.

I am not convinced by these concerns, as they rely heavily on limitations specific to the literature on repeated games, whose models impose very particular constraints. It is true that this literature has pointed out the challenges facing folk-theorem-like cooperation. But these models, for instance, do not typically represent agents’ beliefs. Instead, people observe past actions and adopt rules of behaviour that determine when to punish. In the real world, a substantial part of our communication is used to exchange information about other people’s merits and faults (gossip). This way, we coordinate our beliefs and make joint punishment (e.g. exclusion from the group) possible. The anthropological evidence on how compliance with social norms is monitored, what form punishments take and how they are decided fits this role of communication.

Gintis’s alternative theory is that people are “strongly reciprocal” in the sense that they are genuinely altruistic. The practical difference from Binmore here is not as large as one might think when reading Gintis. Binmore fully allows for the presence of purely altruistic preferences, selected because they are a good way to navigate cooperative games. These preferences are well suited to the underlying strategic structure of the game, in which it is in individuals’ interest to cooperate. Place people in a series of one-shot games where pure altruism does not pay, and you will progressively see altruistic behaviour erode. The difference for Gintis is that truly altruistic preferences could evolve: genes for following altruistic norms could be selected even if altruism is costly to the individual. His support for this idea comes from multilevel group selection. In that perspective, genetic traits favouring costly pro-social behaviour could spread because groups containing more cooperators would tend to outperform other groups, and the indirect benefit of altruistic acts would make them genetically beneficial for altruists themselves.

I am not convinced by multilevel selection, which seems to require rather specific intergroup dynamics to matter much, in particular, limited migration and recurrent group splitting. Binmore’s perspective, by contrast, suggests something that sounds similar but relies on a different mechanism: the cultural evolution of cooperative norms. Groups coordinate on a social equilibrium. Some equilibria are more cooperative and allow groups to reach better collective outcomes. These groups then outperform others, so cooperative norms are selected positively. But since each group is at an equilibrium, individuals are acting in ways that are optimal given that equilibrium. There is therefore no need to posit selection for intrinsically self-sacrificial behaviour. I will discuss cultural group selection in a future post.
