Time for Some Game Theory

An introduction for the novice and for the expert

Nietzsche famously declared: "In individuals, insanity is rare; but in groups, parties, nations, and epochs, it is the rule." Indeed, to an untrained observer, social behaviour can often seem puzzling, if not outright bizarre. A myriad of social norms govern our behaviour in daily life, yet they differ across locations and through time. People partake in rituals within churches, stadiums, and even workplaces. Individuals buy the latest expensive mobile phones, labelling themselves as "techies," despite having minimal understanding of phone technology. They purchase costly bottles of wine, even when they often can't distinguish between high-end and medium-cost wines in blind tests.

In fact, these behaviours aren't bewildering; they often reflect effective ways to behave to do well in social interactions. Many surprising and mysterious aspects of social life spring from the mechanisms of strategic interactions, and game theory is the discipline that deciphers these mechanisms.

In this post, I offer an introduction to game theory. It is designed to be clear for novices and to provide further insights to those who already have some familiarity with the field.

The problem

Uncertainty is a key feature of the world we live in and the circumstances under which we must make decisions. Should you attempt to climb this mountain? Is it prudent to consume this yogurt just beyond its expiration date? Is it worth taking this apparent shortcut? In all these instances, the efficacy of your decision hinges on whether your beliefs align with reality. Do you possess sufficient skill to ascend that mountain? Is the yogurt still edible? Does the alternative route truly offer a quicker path?

The introduction of other people generates a special type of uncertainty: strategic uncertainty. Unlike inanimate objects such as a mountain, yogurt, or a path, which remain static regardless of your decisions, people can adapt. They will try to predict your decisions and adjust their actions accordingly. In response, you will attempt to forecast their thought processes, as they will yours. Whether a decision is beneficial does not solely depend on the correctness of your beliefs relative to an external reality. The uncertainty you grapple with in social interactions differs fundamentally from that associated with solitary decision-making.

Oskar Morgenstern famously illustrated the problem with a strategic conundrum faced by Sherlock Holmes in one of his confrontations with Professor Moriarty. Holmes is fleeing London and trying to avoid being caught by Moriarty. He has to decide at which of two train stations to get off: Canterbury or Dover.

Holmes' preference is to proceed to Dover and flee to continental Europe. However, Moriarty may predict this and apprehend him there. Anticipating this, Holmes may choose to disembark at Canterbury. Yet, foreseeing Holmes' reasoning, Moriarty may elect to wait there. Thus, foreseeing that Moriarty may attempt to apprehend him at Canterbury, Holmes could opt for Dover instead... This problem appears intractable and without a clear resolution. As Morgenstern expressed it: “there is exhibited an endless chain of reciprocally conjectural reactions and counter-reactions. This chain can never be broken by an act of knowledge” (1935).

It is straightforward to identify real-world scenarios that closely resemble the Holmes-Moriarty duel. Should Ukraine counter-attack in the South or in the East? Should a football striker aim left or right when taking a penalty kick? These situations mirror the game of matching pennies: you want to take the action your opponent does not anticipate, but with every step in your reasoning, you may fear that your adversary is following your train of thought and could be one step ahead.

The solution explained simply

Game theory was born from the study of such problems and the attempt to provide a compelling answer to the question of how we ought to engage in these games. The pioneers of this field were John von Neumann, Oskar Morgenstern and John Nash.

Morgenstern was an Austrian economist, reputedly a grandson of the German Emperor Frederick III. Between the First and Second World Wars, economists were trying to establish their discipline on rigorous grounds. In that context, Morgenstern’s use of the Holmes example was not trivial; for him, it illustrated a key problem of economics as a science. Economists were assuming that people could form expectations about future economic activities, and that these expectations would be consistent, thereby leading markets to equilibrium. However, as the Holmes example demonstrated, an individual's expectations about other people's actions depend on those other people's expectations about that individual's actions. As Morgenstern pointed out, such situations seem to lead to a never-ending loop of mind guessing (I think that you think that I think…), and it appeared that there was no definitive way to determine the right course of action and the correct expectations in such situations.

But in 1928, von Neumann had already investigated a solution to such games.[1] A mathematical genius, von Neumann made significant contributions to various fields, including pure mathematics, quantum theory, computer science, and game theory, in addition to collaborating with Oppenheimer on the design of the atomic bomb.[2] In his article, von Neumann considered the strategies of what we could casually describe as paranoid players: players who assume the worst-case scenario, in which the other player is able to see their choice and, as a consequence, plays the best strategy against them. Such players presume that each strategy they choose will lead to the most unfavourable outcome possible (among all the outcomes possible when this strategy is played). Consequently, the rational decision is to select the strategy whose worst outcome is the least damaging one. This strategy, referred to as the minimax strategy, assures the player of a minimum guaranteed payoff in the game.
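The worst-case reasoning described above can be sketched in a few lines of Python. This is only an illustration: the function name `security_strategy` and the payoff numbers are hypothetical and do not come from von Neumann's article. The sketch considers pure (non-random) choices only.

```python
from typing import List, Tuple


def security_strategy(payoffs: List[List[float]]) -> Tuple[int, float]:
    """Return (row index, guaranteed payoff) for the row player.

    payoffs[i][j] is the row player's payoff when they play row i
    and the opponent plays column j.
    """
    # Worst case of each row: the opponent picks the column that hurts most.
    worst_cases = [min(row) for row in payoffs]
    # Choose the row whose worst case is the least damaging.
    best_row = max(range(len(payoffs)), key=lambda i: worst_cases[i])
    return best_row, worst_cases[best_row]


# Hypothetical 3x3 game (payoffs to the row player), for illustration only.
example = [
    [3, -1, 2],
    [1, 0, 1],
    [-2, 4, 0],
]

row, guaranteed = security_strategy(example)
print(row, guaranteed)  # prints: 1 0
```

Here the worst cases of the three rows are -1, 0 and -2, so the paranoid player picks the second row (index 1) and is guaranteed a payoff of at least 0 whatever the opponent does.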

What von Neumann demonstrated is that in some games, if both players employ a minimax strategy, they will converge on their respective guaranteed payoffs. These strategies can then be seen as the solution to this game. Even more significantly, if we extend the notion of strategy to include the possibility for players to randomly select their actions (a concept known as mixed strategies), then a solution will always exist for zero-sum games with two players (games in which one player's gain corresponds to the other's loss).[3] This is von Neumann’s key finding: games like the one played by Holmes and Moriarty, or penalty kicks, can be considered to have a solution! Players need to randomise over their possible strategies to be unpredictable.

Let's take the Holmes-Moriarty game as an example. If Holmes' sole concern is to avoid capture, and Moriarty's only objective is to apprehend Holmes (with neither party concerned about the location of the action), we can treat it as a zero-sum game.[4] If Holmes is paranoid, he’ll consider the worst-case scenario when stopping in Dover (being caught) and when stopping in Canterbury (being caught). Since the worst case is the same for both stations, minimax reasoning over these two pure choices does not single out a solution. However, if Holmes considers a strategy consisting of randomly stopping in Canterbury or Dover with 50% probability each, he then has a 50% chance of evading capture, regardless of Moriarty's decision. If Moriarty follows the same thought process, he also opts to go either to Canterbury or Dover with a 50% probability. Given these decisions, both secure a 50% chance of winning this game, and their decisions are consistent with each other. By considering the possibility of strategies involving random choices, von Neumann showed that the endless “loop of mind guessing” can be broken and the players can reach a common understanding and expectation of how to play that game.[5]
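The 50% result can be checked numerically. The sketch below assumes the simplified zero-sum version of the game described above, in which Holmes only cares about escaping. The function name `guaranteed_escape` and the grid search are illustrative choices, not part of the original analysis.

```python
def guaranteed_escape(p_dover: float) -> float:
    """Holmes' worst-case escape probability if he stops in Dover
    with probability p_dover (and in Canterbury otherwise)."""
    # If Moriarty waits in Dover, Holmes escapes only by stopping in Canterbury.
    escape_if_moriarty_in_dover = 1.0 - p_dover
    # If Moriarty waits in Canterbury, Holmes escapes only by going on to Dover.
    escape_if_moriarty_in_canterbury = p_dover
    # A paranoid Holmes assumes Moriarty picks whichever station is worse for him.
    return min(escape_if_moriarty_in_dover, escape_if_moriarty_in_canterbury)


# Try every probability from 0% to 100% in steps of 1%.
grid = [i / 100 for i in range(101)]
best_p = max(grid, key=guaranteed_escape)

print(guaranteed_escape(1.0))  # always Dover: prints 0.0
print(guaranteed_escape(0.0))  # always Canterbury: prints 0.0
print(best_p, guaranteed_escape(best_p))  # prints: 0.5 0.5
```

Any predictable choice guarantees nothing, while the even coin flip guarantees a 50% chance of escape whatever Moriarty does.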

Von Neumann's solution held considerable relevance to Morgenstern's concerns about the problem posed by strategic interactions for economics. Following the annexation of Austria by Nazi Germany in 1938, Morgenstern moved to Princeton and set out to get in touch with von Neumann, who was already working there. They soon began to collaborate and, between 1941 and 1944, wrote what would become one of the most influential economic books of the 20th century: Theory of Games and Economic Behavior. In this work, they expanded upon the perspective initiated by von Neumann in 1928. The book marked a significant milestone by establishing the formal foundations of game theory.

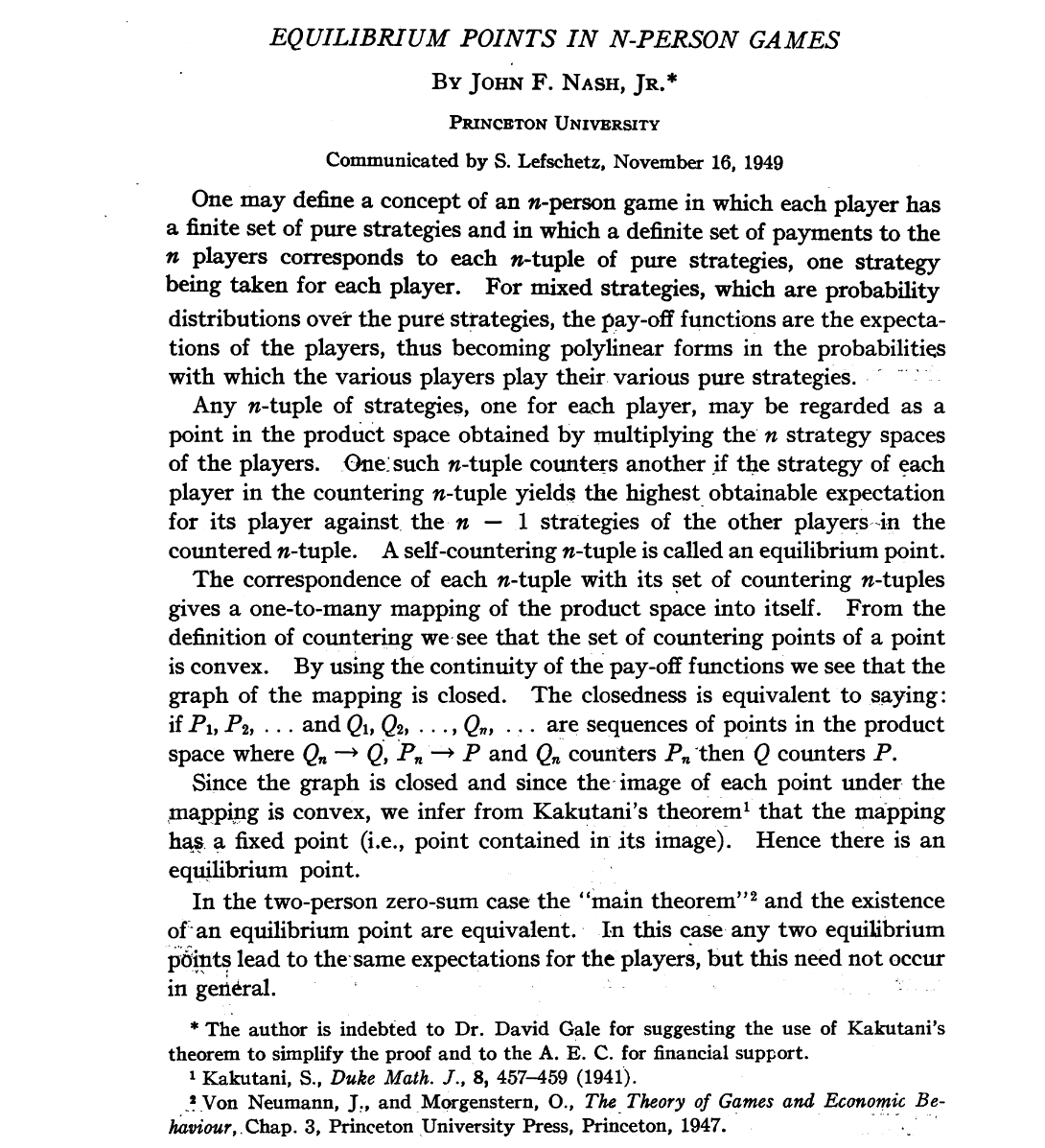

Despite its accomplishments, the book did not succeed in extending the minimax solution to games involving more than two players. In this respect, it is commonly viewed as having fallen short of providing a compelling solution for games in general. However, a few years later, a revolution in game theory was brought about by a 21-year-old mathematician from Princeton, John Nash. In a single-page (!) article, Nash proposed a new concept for a game solution: an equilibrium of the game is reached when no player has an incentive to change their strategy, given the strategies of other players.

The notion of “Nash equilibrium” is amazingly simple, and Nash showed that such an equilibrium exists (possibly with randomisation, as in the Sherlock Holmes scenario) for games involving any finite number of players, each with a finite set of strategies, and any type of payoffs. As Nash pointed out in his last paragraph, his notion of equilibrium produces the same solution as von Neumann and Morgenstern’s minimax in the two-player zero-sum game. But while von Neumann and Morgenstern had struggled to extend the minimax solution beyond two-player zero-sum games in their 600-page book, Nash’s solution could be extended to any other case!

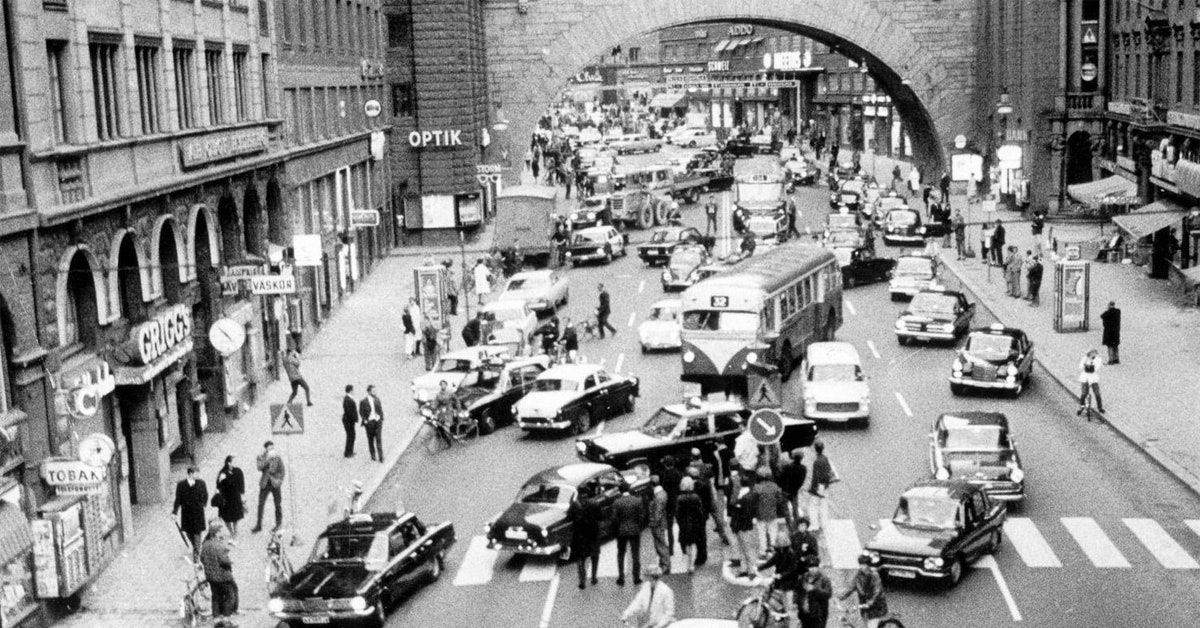

To illustrate the concept of a Nash equilibrium, consider a simple example. Every time people drive their cars, they implicitly choose a side of the road.[6] In some countries, they drive on the left (e.g. UK, Australia, Japan), in others on the right (e.g. USA, continental Europe, China). If you live in a country where people drive on the right, it would be dangerous to drive on the left; it is therefore in the best interest of each person to stick to driving on the right in countries where people usually do so, and likewise for the left. Driving on one side of the road is an equilibrium in the game of driving.
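The definition can be turned into a mechanical check: for every combination of choices, ask whether either player could do better by switching alone. The sketch below does this for a two-driver version of the driving game. The payoff numbers (1 for matching sides, -1 for a mismatch) and the function name `pure_nash_equilibria` are assumptions made for illustration, and only non-random (pure) strategies are checked.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def pure_nash_equilibria(a: Matrix, b: Matrix) -> List[Tuple[int, int]]:
    """List the pure-strategy Nash equilibria of a two-player game.

    a[i][j] and b[i][j] are the payoffs of player 1 and player 2
    when player 1 plays i and player 2 plays j.
    """
    equilibria = []
    n_rows, n_cols = len(a), len(a[0])
    for i in range(n_rows):
        for j in range(n_cols):
            # Player 1 cannot gain by switching rows, given column j.
            row_ok = all(a[i][j] >= a[k][j] for k in range(n_rows))
            # Player 2 cannot gain by switching columns, given row i.
            col_ok = all(b[i][j] >= b[i][k] for k in range(n_cols))
            if row_ok and col_ok:
                equilibria.append((i, j))
    return equilibria


sides = ["left", "right"]
# Hypothetical payoffs: 1 if both drivers pick the same side, -1 otherwise.
driver_1 = [[1, -1], [-1, 1]]
driver_2 = [[1, -1], [-1, 1]]

for i, j in pure_nash_equilibria(driver_1, driver_2):
    print(sides[i], sides[j])
# prints:
# left left
# right right
```

Both conventions come out as equilibria: once everyone else drives on a given side, nobody gains by switching alone.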

To get an even better idea of what a Nash equilibrium is, we can consider strategies that are not part of such an equilibrium. An example can ironically be found in the biopic A Beautiful Mind (2001), in which Russell Crowe portrays Nash. The film attempts to explain Nash’s insight about the equilibrium of a game in a scene set in a bar. Nash and his fellow mathematicians contemplate the best strategy to ask several girls to dance. Everyone is drawn to one girl in particular, a blonde. Nash, in a moment of revelation, explains to his colleagues that if they all attempt to ask the blonde girl to dance, they will undercut each other and face rejection. Subsequently, the other girls will not wish to be considered as their second choice. To avoid spoiling each other's chances, they should refrain from competing for the blonde girl's attention and instead invite the other girls to dance.

Having Nash deliver such advice would hurt the mind of any game theorist viewing the scene. It is obviously not an equilibrium. If neither Nash nor his colleagues are courting the blonde, then one of them could gain from doing so. The scenario in which nobody approaches her is not stable in that regard.[7] This example helps us understand why we should expect rational players to gravitate towards an equilibrium in a game. If a game were not being played at an equilibrium (for example, if Nash and his colleagues were all avoiding the blonde girl), it would be rational for one of them to deviate from this scenario and approach the blonde girl.
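The instability of the film's advice can be verified with a small deviation check. The sketch below is a deliberately crude model of the bar scene with three men. The payoffs are hypothetical: 2 for being the only one to approach the blonde, 0 for approaching her alongside a rival, and 1 for asking another girl.

```python
from typing import Tuple

Profile = Tuple[str, ...]


def payoff(player: int, profile: Profile) -> int:
    """Hypothetical payoff of one man, given everyone's choice."""
    if profile[player] == "other":
        return 1  # a dance with one of the other girls
    suitors = profile.count("blonde")
    # The blonde accepts only if exactly one man approaches her.
    return 2 if suitors == 1 else 0


def is_nash(profile: Profile) -> bool:
    """True if no single player gains by changing his own choice."""
    for player, choice in enumerate(profile):
        alternative = "other" if choice == "blonde" else "blonde"
        deviation = profile[:player] + (alternative,) + profile[player + 1:]
        if payoff(player, deviation) > payoff(player, profile):
            return False
    return True


print(is_nash(("other", "other", "other")))     # film's advice: prints False
print(is_nash(("blonde", "blonde", "blonde")))  # everyone competes: prints False
print(is_nash(("blonde", "other", "other")))    # prints True
```

Under these assumed payoffs, the situations where exactly one man approaches the blonde are the stable ones. The film's advice fails because any one of the men would gain by breaking ranks.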

OK I understand… No wait, what does it all mean?

All that is good: we now have an idea of how rational people would end up playing strategic games. Or, wait, do we? Have you noticed the scientific prestidigitation I have just performed here (one that is routinely performed in introductory game theory classes)? I discussed how rational players would play a one-off strategic situation, like Holmes and Moriarty, or Nash and his colleagues. But then, as a justification, I argued that when a game is not played at an equilibrium, players could deviate given other players’ strategies. This suggests that either this game is played repeatedly, or that players see what others are doing and can decide to shift their strategy. In essence, I've subtly introduced time into the discussion, even though I was describing games where all players must make their decisions simultaneously.

When there is no time involved—when the game is a one-off and players select their strategies simultaneously—why would rational players independently converge to playing a Nash equilibrium? They should do so if they expect others to play Nash equilibrium strategies. However, why would they make such an assumption if the game hasn't been played before and won't be played again in the future? If we can't provide a satisfactory answer to this question, in what sense is the Nash equilibrium a solution to the game considered?

Most interestingly, Nash directly addressed this question in an unpublished part of his thesis, which has been referred to as the ghost section.[8] He proposed two interpretations: rationalistic and mass action. The rationalistic interpretation suggests that rational players would mentally simulate the various ways the game could unfold, discovering that only a Nash equilibrium eludes criticisms of the form: “This strategy is the best for me given what I think other players would play. But if I play this strategy, at least one other player could improve their situation by playing differently”. A variant of this explanation, proposed by Ken Binmore, is that if an expert were to write a book about the best way to play games, then: “Such a great book of game theory would necessarily have to pick a Nash equilibrium as the solution of each game. Otherwise, it would be rational for at least one player to deviate from the book’s advice, which would then fail to be authoritative.” (Binmore, 2007).

This is an interesting perspective, but when faced with non-trivial strategic situations for the first time, people typically fail to play a Nash equilibrium of the game (Crawford et al., 2013). And, obviously, for most novel strategic situations people face, no book like the one suggested by Binmore exists.

The other interpretation is the “mass-action” one, whereby a game is played repeatedly in a population. Every game is played between new players, but past actions can shape expectations about what other players would do in the game. This is the case, for instance, in the driving game, where you have to choose a side of the road every time you drive a car. In such a situation, only a Nash equilibrium would be stable, as nobody would have an interest in deviating given that it is the way this game is played in society. This interpretation is more realistic and suggests that a Nash equilibrium can be expected to be played in populations used to playing a given game.

However, it does not explain how a population reached an equilibrium or why a specific Nash equilibrium is played (there are often many possible equilibria). This interpretation therefore paves the way for an investigation of the social dynamics that lead populations to coordinate on some solutions of games. This investigation is the object of evolutionary game theory. It is also the object of the study of the evolution of economic institutions and of cultural and social norms, all seen as Nash equilibria of large social games. This perspective is addressed in a rich body of literature where biology, culture and history are all relevant to understanding human behaviour.
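To give a flavour of such dynamics, here is a toy simulation of the driving game played in a population. It is a minimal sketch under strong assumptions, not a model taken from the evolutionary game theory literature: the revision rule, the `revision_rate` parameter and the function name `simulate` are all hypothetical.

```python
def simulate(share_right: float, revision_rate: float = 0.2,
             periods: int = 50) -> float:
    """Return the share of drivers on the right after some periods.

    Each period, a fraction `revision_rate` of drivers reconsiders its
    habit and adopts the side currently used by the majority.
    """
    for _ in range(periods):
        # Best response: join the majority (a tie is arbitrarily resolved
        # in favour of the left in this sketch).
        target = 1.0 if share_right > 0.5 else 0.0
        # Only the revising drivers move towards the best response.
        share_right += revision_rate * (target - share_right)
    return share_right


print(round(simulate(0.6), 3))  # prints: 1.0
print(round(simulate(0.4), 3))  # prints: 0.0
```

The population settles on one of the two conventions, and which one depends entirely on where it started: a slight initial majority is enough to tip everyone to the same side.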

Games are everywhere

Describing games of strategic interactions, von Neumann pointed out in his 1928 article that “there is hardly a situation in daily life into which this problem does not enter”. Indeed, while game theory is often taught with a few simple games, games are really everywhere.

When people compete for payoffs, they are in competitive games, such as when firms race for innovations, employees compete for promotions or countries fight for resources. Sometimes the competitors have a very good understanding of each other's motivations, strengths and weaknesses. In that case, we speak of games with complete information, and players do not learn anything from observing the game being played. However, more often than not, people possess incomplete information about their competitors. This leads to complex dynamics where players learn from past actions. It also introduces additional layers of strategy, as players attempt to influence each other's beliefs through tactics such as signalling, bluffing, or even sandbagging, where one pretends to be weak to deceive an opponent.

In other instances, players have similar incentives and want to cooperate. However, the effectiveness of their cooperation often hinges on their ability to coordinate. For instance, in the driving game, drivers are largely indifferent to which side of the road they use, their primary concern being the avoidance of accidents. Finally, many situations involve games that contain both competitive and cooperative elements. A good example is bargaining situations, where players want to reach a deal (cooperation), yet their preferences regarding the specifics of the deal may diverge (competition). Thomas Schelling (1960) called this class of games “mixed-motive games”.

Competition, cooperation, and coordination: these considerations permeate all aspects of our social lives, from small interactions with our friends and partners to large ones between organisations and countries. As we navigate these interactions, we constantly strive to anticipate what others desire and how they perceive our intentions, basing our inferences on past experiences. With this understanding, game theory is not merely an abstract mathematical construct, but the grammar of social interactions.

This post is the first in a series on the power of game theory to explain the patterns of behaviour we observe in the real world. In upcoming posts, I will show how the insights from game theory can help explain the seemingly complex and sometimes puzzling aspects of social behaviour.

References

Basu, K., 2018. The republic of beliefs: A new approach to law and economics. Princeton University Press.

Bhattacharya, A., 2021. The man from the future: The visionary life of John von Neumann. Penguin UK.

Binmore, K., 2005. Natural justice. Oxford University Press.

Binmore, K., 2007. Game theory: A very short introduction. Oxford University Press.

Borel, E., 1921. La théorie du jeu et les équations intégrales à noyau symétrique. Comptes rendus de l’Académie des Sciences, 173, pp.1304-1308.

Crawford, V.P., Costa-Gomes, M.A. and Iriberri, N., 2013. Structural models of nonequilibrium strategic thinking: Theory, evidence, and applications. Journal of Economic Literature, 51(1), pp.5-62.

Fukuyama, F., 2011. The origins of political order: From prehuman times to the French Revolution. Farrar, Straus and Giroux.

Giocoli, N., 2003. Modeling rational agents: From interwar economics to early modern game theory. Edward Elgar Publishing.

Hoffman, M. and Yoeli, E., 2022. Hidden Games: The surprising power of game theory to explain irrational human behavior. Basic Books.

Morgenstern, O., 1935. Perfect foresight and economic equilibrium. Selected economic writings of Oskar Morgenstern, pp.169-83.

Nash Jr, J.F., 1950. Equilibrium points in n-person games. Proceedings of the National Academy of Sciences, 36(1), pp.48-49.

Nietzsche, F.W., 1886. Beyond good and evil.

Richerson, P.J. and Boyd, R., 2008. Not by genes alone: How culture transformed human evolution. University of Chicago Press.

Sandholm, W.H., 2010. Population games and evolutionary dynamics. MIT Press.

Schelling, T.C., 1960. The Strategy of Conflict. Harvard University Press.

Smith, J.M., 1982. Evolution and the Theory of Games. Cambridge University Press.

von Neumann, J., 1959 (1928). On the theory of games of strategy. Contributions to the Theory of Games, 4, pp.13-42.

von Neumann, J. and Morgenstern, O., 1944. Theory of games and economic behavior.

Waldegrave, J., 1968 (1713). Minimax solution to a 2-person zero-sum game, reported 1713 in a letter from P. de Montmort to N. Bernoulli. In Baumol, W.J. and Goldfeld, S. (eds), Precursors in Mathematical Economics, pp.3-9. London School of Economics, London.

Notes

[1] At least two other authors have been acknowledged for having initiated serious reflections on the solutions to games of strategy: the mathematician Émile Borel (1921) and Waldegrave (1713).

[2] See Bhattacharya (2021)’s excellent biography.

[3] Von Neumann’s minimax theorem states that a pair of minimax strategies, one for each player and possibly mixed, exists in every two-person zero-sum game with finitely many strategies.

[4] The situation is akin to a penalty kick. Holmes is similar to a striker who aims to make a decision different from Moriarty’s. And Moriarty is like a goalkeeper who strives to mirror Holmes' decision.

[5] In the actual Holmes and Moriarty game, the probabilities that Holmes should assign to stopping in Dover or Canterbury will depend on the value for Holmes of stopping in Dover (which offers the possibility of escaping to continental Europe) or Canterbury. In Theory of Games and Economic Behavior (pp. 176-178), von Neumann and Morgenstern show the solutions for some hypothetical payoffs in the game.

[6] You may think that people don’t choose sides as it is a legal obligation. But as you would see while watching police TV shows, people have the physical possibility to drive on the wrong side of the road, and some sometimes choose to do so.

[7] The writers of the movie seemingly aim to suggest that Nash realises a Nash equilibrium can lead to bad social outcomes, contradicting the suggestion from Adam Smith that the pursuit of self-interest by all leads to collective wealth thanks to market mechanisms. Setting aside the fact that it is a simplistic reading of Adam Smith, the “bad” situation considered (when Nash and all of his colleagues ask the blonde girl out) is not credibly a Nash equilibrium. In that situation, any of the men would have an incentive to switch to asking out one of the other girls.

The fact that a multimillion-dollar Hollywood movie on the most famous game theorist could not correctly depict the few seconds in the movie dedicated to his work in game theory is something to ponder.

[8] See the discussion in Giocoli (2003), an excellent reference on the birth of game theory.