How we decide what is fair in everyday life

Equality or total satisfaction?

In this series of posts, I am explaining what human morality is. In my previous post, I described how we can think of fairness norms as generic solutions to the daily problems of allocating rights and duties in society. Instead of haggling and disagreeing, we can often move straight to an agreement that both sides understand the other party will accept. Here, I explain how we come to agree on what is fair and what is not, following one of the most insightful perspectives proposed in recent decades on this question: the theory of the game theorist Ken Binmore. In the process, I address one of the most important questions at the heart of political philosophy: should fairness aim at equality between people, or at the greatest total satisfaction? In other words, should we think in egalitarian or utilitarian terms?

Fairness plays a key role in human interactions: it shapes which solutions we regard as just. “It’s unfair!” is one of the first claims children learn to make. And in adult political debates, claims about social injustice play a major role.

But how do we know what such statements about fairness mean? Open one of the many textbooks in moral and political philosophy, and you will be struck by the fact that there are huge debates between highly respectable and knowledgeable scholars about what makes something fair. Not only can we not assume that toddlers have an intellectual grasp of the issues raised in these textbooks, but most adults who discuss social justice would also struggle to define what they mean by the term.

Even though toddlers are no political philosophers, their claims about unfairness often work. Parents, and sometimes even siblings, frequently recognise a claim as valid and undertake to remedy the situation. In other cases, parents will disagree and sometimes convince the toddler that the claim was unfounded.

But what is happening in such situations? How do we end up agreeing on a view that something is “fair” or “unfair”? This question is relevant to all the situations in which we have to allocate rights and duties. Whether this allocation is discussed openly or whether we agree on it without debate, we are guided by our sense of fairness: what is right and what is likely to be perceived as right by other people.

Even when fairness norms are not expressly mentioned, they are nonetheless in the background, shaping our views and decisions. When we cut a cake at a birthday party, we do not need to say, “I am cutting slices evenly to be fair”, but we do it anyway, and children would be quick to point out any deviation.

Situations in which rights and duties have to be allocated are pervasive. Who should have the last cookie in the jar? Who should be promoted at work? Who should be the first author on an academic paper? Who should give way in a narrow corridor? How much tax should rich people pay?

Our interests conflict, at least somewhat, whenever we have to allocate rights and duties. We want more rights and fewer duties. It is therefore striking that our social interactions involve relatively little friction in the form of open disagreement and haggling. Indeed, we are often averse to open haggling, which can feel adversarial. The absence of haggling is a kind of puzzle. How do we constantly and easily manage to solve these situations and agree on a commonly acceptable compromise?

Despite major progress in the behavioural sciences over recent decades, social scientists do not share a unified account of how people converge on specific fairness judgements across contexts, and the prevailing answers remain partial. Thirty years ago, however, the game theorist Ken Binmore proposed a theory that is strikingly elegant and offers a compelling explanation of what fairness is and how we make fairness judgements. Unlike many theorists in the social and behavioural sciences, Binmore does not build his theory from scratch. He builds on the accumulated knowledge generated by decades of work in game theory and evolutionary theory. On a topic that is quite treacherous, the rigour of game theory gives Binmore’s theory a rare clarity. I present here his theory about how we make judgements such as “it’s unfair”.

Fairness problems are everywhere

Problems about how to allocate rights and duties, resources and costs in human groups are pervasive. Their omnipresence reflects the fact that human sociality is based on cooperation. Successful cooperation requires agreement on who does what, and the benefits from cooperation naturally raise the question of who gets what.

Humans manage such allocation problems seamlessly all the time without thinking about them. So it is surprising that, when we ask “what is fair?”, the answer does not seem obvious. Equality is often mentioned, but equality is not a simple answer when people differ in their contributions, talents and needs. The term equity is sometimes preferred, but, in truth, people who use it often have only a vague idea of what the notion means in philosophy.

In the confusing maze of competing explanations and half-baked theories based on intuitions, Binmore offers striking clarity. His naturalistic approach does not rely on metaphysical assumptions about invisible principles of morality out there. It builds from the ground up. It starts from a rigorous understanding of human interactions, of the challenges and opportunities we face, and of the stable solutions that can emerge to solve the former and seize the latter.

In my previous post, I explained how, in that framework, fairness norms are social conventions commonly agreed within a group that solve, quickly and with minimal friction, the questions of rights and duties, resources and costs.

This answer provides a radically new insight into fairness compared with the way it is discussed in society and in philosophy textbooks. It opens a path to strikingly simple and clear answers to questions that often seem like intractable metaphysical puzzles about what is right and wrong. In this post, I explain how Binmore accounts for what we come to find fair in a given social setting. To make his general theory more concrete, I begin with two familiar kinds of fairness problems.

Alice and Bob

Alice and Bob live together. They have to solve common household problems about how to share the chores needed to keep the house functional: who does the dishwashing and the laundry? Who puts the bin out? Who mows the lawn? What is a fair allocation of chores? Is it 50–50 for each chore? Across chores? What if Alice enjoys cooking more, and Bob enjoys mowing the lawn more? How should that be taken into account when deciding what is “fair”?

They also have to solve the problem of sharing the benefits of being together. For instance, they like spending the evening together watching television. But they have different preferences about what to watch. Alice prefers crime dramas, and Bob prefers sci-fi series. What is a fair way for them to share viewing time? Should it be 50–50, alternating every day or every week? What if Alice is a big fan of crime dramas while Bob only slightly prefers sci-fi series? Should they still split viewing time evenly? Would it be “fair” for Bob to ask for that?

Binmore provides a general treatment of such problems. In this post, I focus on the case in which Alice and Bob have equal bargaining power. Neither has the ability to push his or her own interest more than the other. In that case, what would they agree is a fair solution to the problems they face?

Utilitarianism and egalitarianism

Utilitarianism: maximise the sum of happiness

Utilitarianism is a moral and political philosophy that relies on a form of equality: everyone’s happiness matters equally. From that position, utilitarians argue that the right thing to do when making collective decisions is to aim for the greatest total happiness. Jeremy Bentham famously summarised this doctrine in these words:

It is the greatest happiness of the greatest number that is the measure of right and wrong. — Bentham

If Alice and Bob were utilitarians in their choice of television programme, they would choose the solution that maximises the sum of their satisfaction. Given that Alice enjoys crime dramas more than Bob enjoys science fiction series, they would end up watching crime dramas all the time.1

Egalitarianism: improve the fate of the person who is worse off

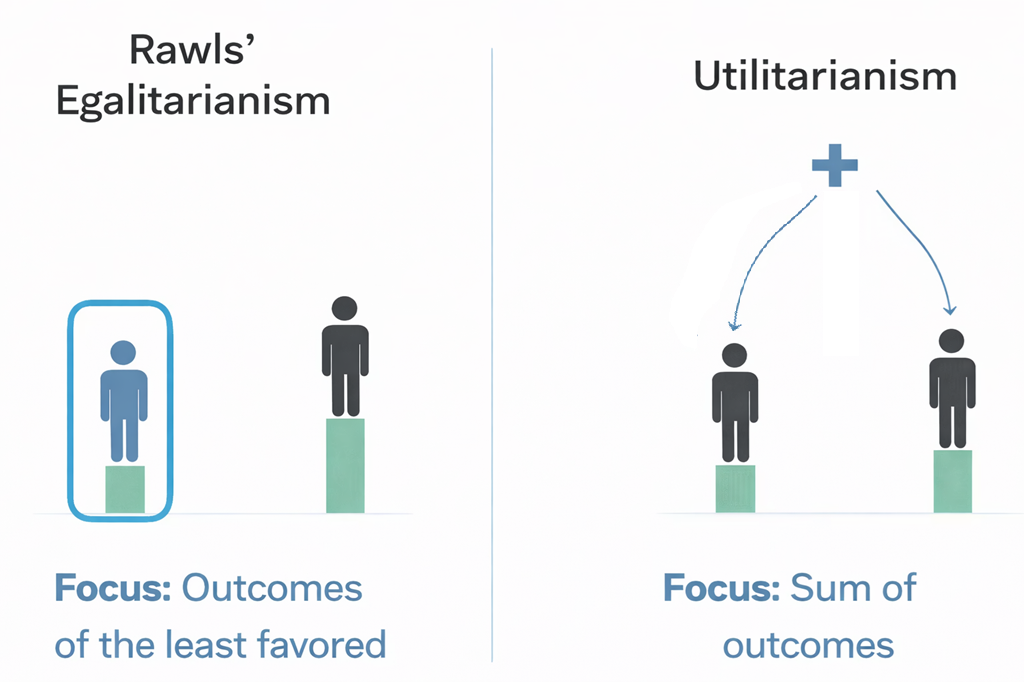

One of the landmarks of twentieth-century political philosophy was John Rawls’s A Theory of Justice, published in 1971. In it, Rawls opposes utilitarianism. He argues that it can lead to undesirable outcomes, such as the sacrifice of some individuals’ basic rights for the greater happiness of the many. In our example, Bob would have to accept never watching sci-fi series in order to maximise total happiness. Such a solution may sit uneasily with our intuitions about fairness.

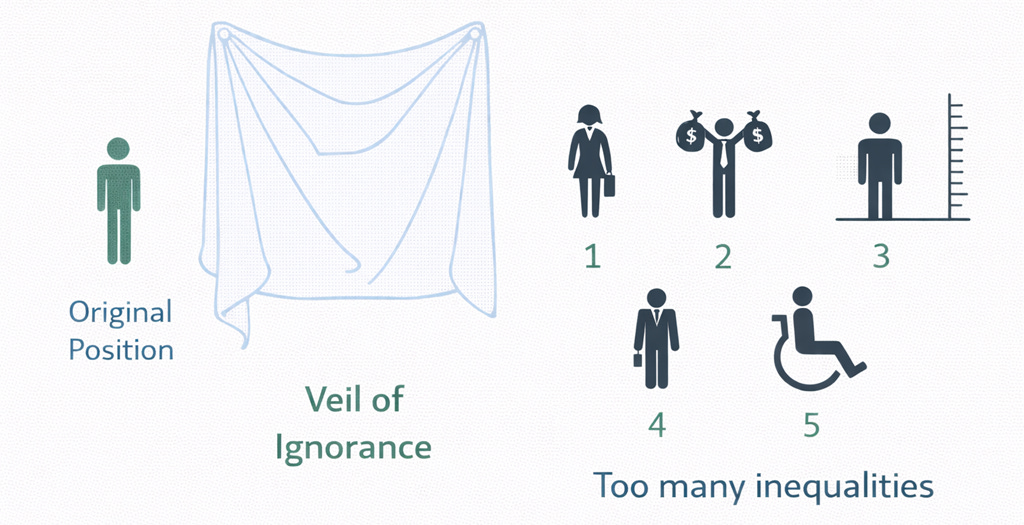

In place of utilitarianism, Rawls suggests another approach. To determine what social agreement is fair, we should imagine what it would be like to be other people and to experience things as they do. He calls this thought experiment the veil of ignorance: we imagine ourselves as ignorant of our own identity, looking at a social situation while knowing that we could turn out to be any of the people involved. Rawls calls the fictitious standpoint created by this thought experiment the original position. It is from this original position that we should form a view about whether we like a social situation or not. What this thought experiment forces us to do is to consider equally the fate of everybody involved because, under the veil of ignorance, we would not know who we are in that situation.

Rawls argues that under the veil of ignorance we would choose to organise the social situation so as to make the most disadvantaged person as well off as possible. With this conclusion, Rawls supports egalitarianism.2
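To make the contrast between the two rules concrete, here is a minimal sketch applied to Alice and Bob's viewing time. The satisfaction numbers and the linear form are assumptions chosen for illustration, not values from Rawls or Binmore, and the "worse-off first" rule is only a crude stand-in for Rawls's much richer theory.

```python
# Hypothetical satisfaction per unit of viewing time (assumed numbers for illustration).
ALICE_CRIME = 10.0  # Alice is a big fan of crime dramas
BOB_SCIFI = 4.0     # Bob only mildly prefers sci-fi series

# Candidate splits: 0%, 1%, ..., 100% of evenings spent on crime dramas.
SHARES = [i / 100 for i in range(101)]


def satisfactions(crime_share):
    """Return (Alice, Bob) satisfaction when crime_share of evenings are crime dramas."""
    return ALICE_CRIME * crime_share, BOB_SCIFI * (1.0 - crime_share)


def utilitarian_share(shares):
    """Split that maximises the sum of the two satisfactions."""
    return max(shares, key=lambda s: sum(satisfactions(s)))


def maximin_share(shares):
    """Split that maximises the satisfaction of whoever is worse off."""
    return max(shares, key=lambda s: min(satisfactions(s)))


if __name__ == "__main__":
    print("utilitarian split:", utilitarian_share(SHARES))
    print("worse-off-first split:", maximin_share(SHARES))
```

Under these assumed numbers, the utilitarian rule picks crime dramas every evening, while the worse-off-first rule roughly equalises the two satisfaction levels. Because Bob gets less out of each evening in this toy setup, equalising satisfaction actually hands him most of the viewing time.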

Ken Binmore’s theory about how we make fairness judgements

How do we solve these problems in practice? What do we do when we assess whether a proposed solution is “fair” or not? Binmore thinks that Rawls is right to use the veil of ignorance. In fact, unlike Rawls, who sees it as something we ought to do in order to find the right solution, Binmore thinks it captures, in stylised form, something close to what we do all the time. Even though we may not think in such abstract terms, we put ourselves in other people’s shoes to see a given problem from their point of view and to look for a solution they could accept.

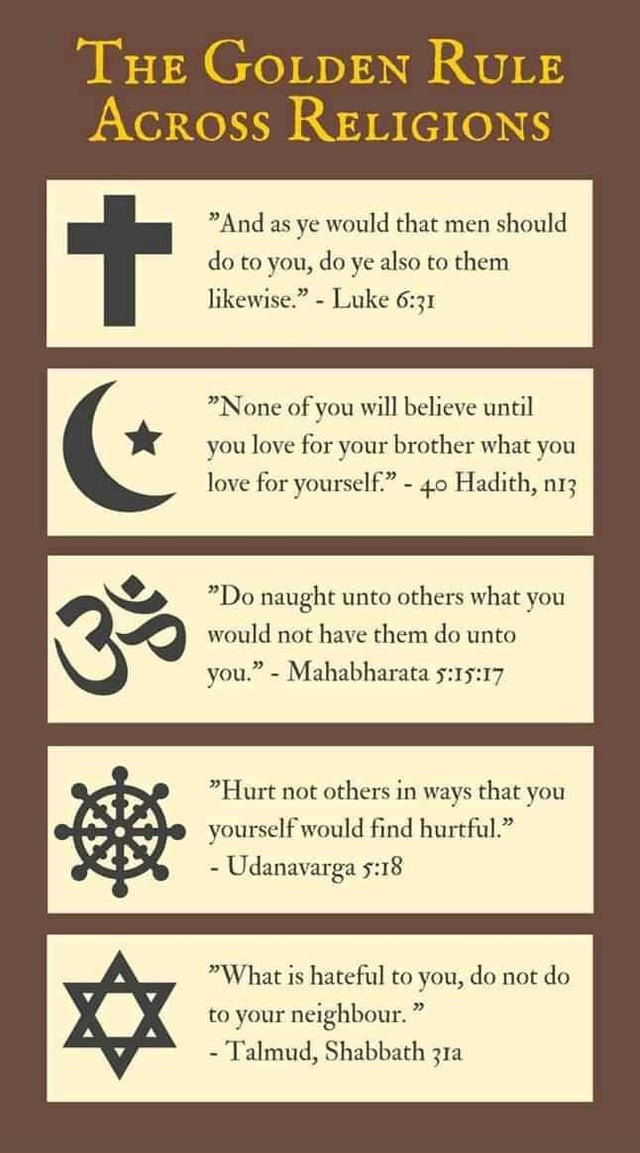

In fact, doing so is very intuitive to us. It amounts to following the Golden Rule.

The Golden Rule

The Golden Rule is one of the most widely shared principles of morality across human societies. Its presence across different continents and widely different faiths suggests that it was, in all likelihood, codified independently in different places. A simple version of the Golden Rule is:

Do unto others as you would like to be done unto you.

This is not just a lofty moral principle enshrined in religious texts. It is also a principle frequently used to teach children how to behave properly. Alice, learning that her toddler has hit another child, might say:

Imagine you were her. Would you be happy with what you have done? No. So you should not do that to her.

Binmore sees the Golden Rule as a cognitive device that humans evolved to help them agree on how to treat each other whenever they have to allocate rights and duties, benefits and costs of cooperation. The Golden Rule asks us to step into the shoes of others and take their point of view when deciding how to treat them. It may have emerged naturally as a way to simulate bargaining in our heads and anticipate what other people are likely to accept and what they are likely to reject.

For that reason, Binmore sees the veil of ignorance as an elaborate version of the Golden Rule that we use in everyday life to solve the many situations in which we have to agree on how to interact with other people and how to share the gains from cooperation.3

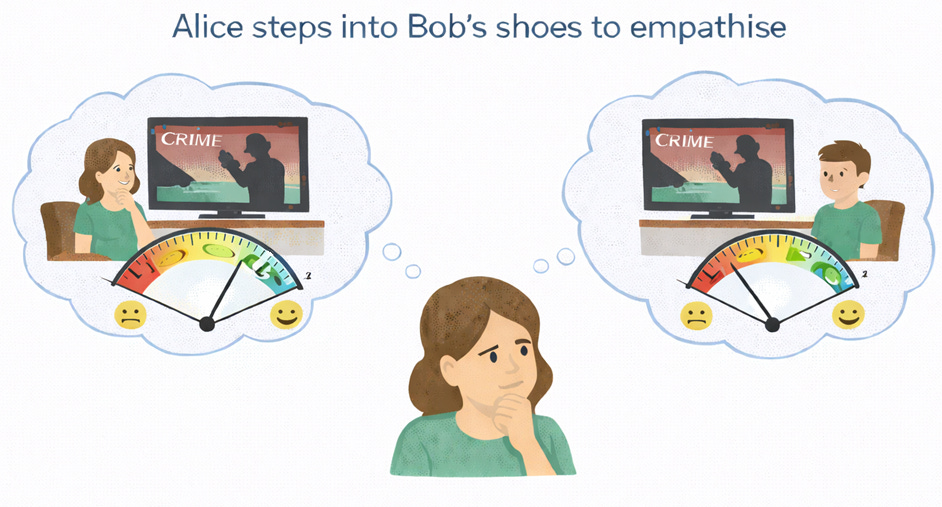

Put very simply, what we do is to place ourselves in the shoes of the people with whom we are interacting. We see the situation from their point of view. They do the same, and this process allows us to converge on shared agreements. When Alice thinks about what the fair solution is, it is as if she were placing herself in Bob’s shoes and seeing the situation from his point of view. She can then consider each option both from her own point of view and from Bob’s.4

Empathetic preferences

To put ourselves in the shoes of other people as a way of identifying what is fair, we need to be able to compare how happy we would be if we were different people in different scenarios.

In other words, Alice needs to be able to compare her own satisfaction and Bob’s under different possible agreements. For Alice to appreciate that Bob may not be as happy as she would be watching crime dramas, she needs to be able to say something like “I enjoy crime dramas more than Bob enjoys them”.5

Binmore relies on the work of the game theorist John Harsanyi to give such statements formal meaning. Harsanyi formalised the idea that people have preferences not only over their own situation but also over how it compares with the situations of others, as those others experience them. Binmore calls the preferences you form when you put yourself in the shoes of others empathetic preferences.

I explain Harsanyi’s theory in more detail in an appendix at the end of this post.

When Alice puts herself in Bob’s shoes while he is watching a sci-fi series, she must imagine whether Bob would be as happy in that situation as she would be watching crime dramas.6 Now, imagining the different solutions for sharing viewing time from her own point of view and Bob’s, she can choose the solution that is going to be acceptable to both of them.

Binmore sees in Rawls’s veil of ignorance a way to describe this thought process, in which Alice gives equal consideration to her own perspective and to Bob’s. Before Rawls’s book appeared, Harsanyi had already developed a moral theory based on the same idea as the veil of ignorance. In 1955, he described what he called a “special impartial and impersonal attitude” in which someone considers

what social situation he would choose if he did not know what his personal position would be in the new situation chosen (and in any of its alternatives) but rather had an equal chance of obtaining any of the social positions existing in this situation, from the highest down to the lowest. — Harsanyi (1955)

But, unlike Rawls, Harsanyi thought that under that veil of ignorance, people, not knowing who they would turn out to be, would agree on a social situation that maximised their expected well-being. In other words, Harsanyi argued that, under the veil of ignorance, we would be utilitarian. His argument would seem intuitive to any economist: if you do not know who you will be in a given situation, that situation is akin to a lottery. When you choose a lottery, you pick the one with the best expected gain.
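Harsanyi's lottery argument can be written out in a couple of lines. The notation is an assumption introduced here for illustration: there are $n$ people, $x$ is a social situation, and $u_i(x)$ is the well-being of person $i$ in that situation, measured on a common scale.

```latex
% Behind the veil, each of the n positions is equally likely.
\mathbb{E}[u \mid x] \;=\; \sum_{i=1}^{n} \frac{1}{n}\, u_i(x) \;=\; \frac{1}{n} \sum_{i=1}^{n} u_i(x)

% Since 1/n is a positive constant, the best lottery is the situation with the largest total:
\arg\max_{x} \; \mathbb{E}[u \mid x] \;=\; \arg\max_{x} \; \sum_{i=1}^{n} u_i(x)
```

Under these assumptions, choosing the best expected outcome behind the veil coincides with the utilitarian rule of maximising the sum of well-being.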

Hence, from the same intellectual device, the veil of ignorance, Rawls and Harsanyi arrived at two different conclusions: egalitarianism and utilitarianism. Who is right?

Binmore’s Copernican move

The debates between utilitarianism and egalitarianism are complex, and the books and papers written on the topic could fill libraries. Binmore proposes a simple resolution of this debate, one that makes the issue much clearer.

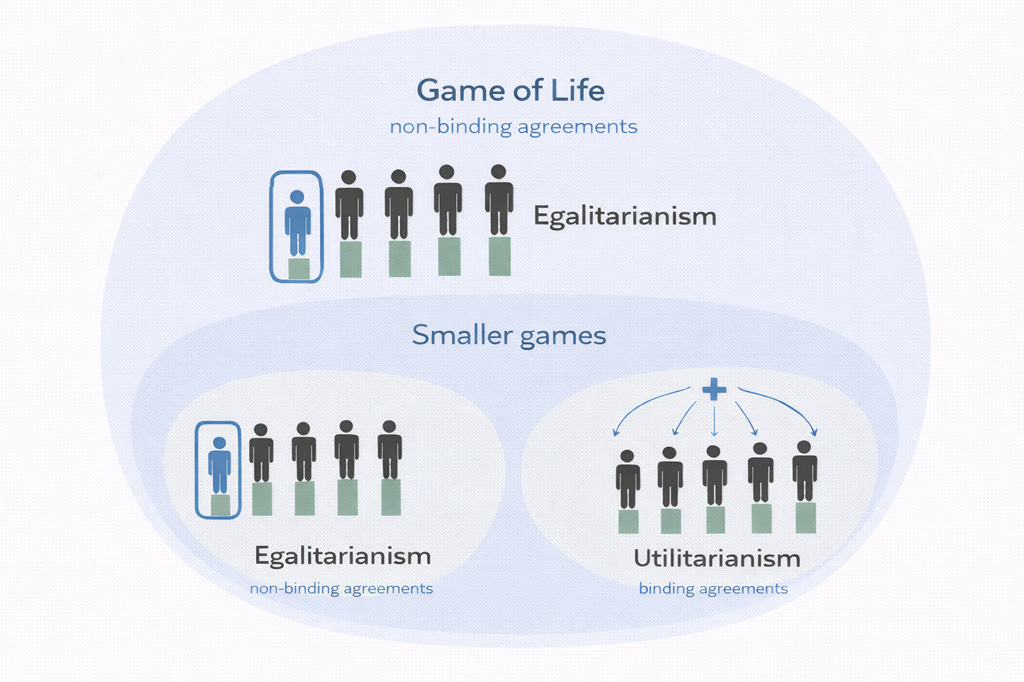

Agreements in the Game of Morals need to be self-enforcing in the Game of Life

Binmore’s solution is to see utilitarianism and egalitarianism as valid answers to two different types of bargaining problems. He points out that Harsanyi and Rawls made an implicit assumption with major consequences: that the agreement under the veil of ignorance is binding. A binding agreement is like a contract signed in front of a lawyer. If one party decides not to honour the contract, a third party can intervene and enforce its terms.

If agreements about fairness are binding, then Binmore agrees that Harsanyi would have to be right and we should be utilitarian. Behind a veil of ignorance, we would choose the situation that is, on average, the best.7

The idea that agreements behind the veil of ignorance are binding is problematic, however. We should not forget that the veil of ignorance is only a thought experiment used to find a solution together in real life. There is no actual contract signed “under the veil”. How could a solution reached under the veil be binding in the real world once we step out of that thought experiment? Both Rawls and Harsanyi assume that respecting the agreement we would reach under the veil is a moral duty.8

But that is a problematic move. Where would such a duty come from? Rawls’s and Harsanyi’s theories are meant to explain how we should make moral judgements. But if they assume some moral duty a priori, they assume what they are meant to explain.

Binmore rejects such a skyhook. There is, in his view, no basis for assuming that people are somehow bound to respect a hypothetical agreement reached in a thought experiment.

There is nothing about the circumstances under which people are hypothesized as bargaining in the original position that can justify the assumption that they are bound, either morally or in practice, to abide by the terms of a hypothetical deal reached in the original position. Thus, in my theory, compliance is assumed only when compliance is in the best interests of those concerned. — Binmore (1994)

Consider, for instance, a scenario in which Bob really hates dishwashing and doing the laundry, and really likes watching television. The allocation of rights and duties that maximises total happiness in Alice and Bob’s household might be for Bob to watch television after every dinner while Alice washes the dishes and does the laundry. To justify this, Bob might say: “I really dislike washing the dishes and doing the laundry more than you do, and I enjoy watching television more than you. So this is the solution that maximises our total satisfaction, and it is what we would have agreed under the veil of ignorance.” But if Alice and Bob have equal bargaining power, nothing prevents Alice from answering that she does not care about the argument that this is a “fair” solution because, under the veil of ignorance, she might have ended up being Bob and enjoying that situation. In practice, she is Alice, and she does not like the idea of such a lopsided deal.

A key point made by Binmore is therefore that an agreement in the Game of Morals only works if it is sustainable as a bargaining agreement in the Game of Life. In other words, it needs to be renegotiation-proof. When Alice puts herself in Bob’s shoes to consider what would count as a possible agreement, the agreement has to be one she is actually happy to respect in the real world once she steps back into her own shoes.

This has a very important practical implication. When agreements are not binding, only egalitarian deals are sustainable and renegotiation-proof.9 In an egalitarian solution, nobody has an incentive to reopen the case by saying that fairness requires a different assignment of roles, because everybody is getting the same outcome.10

Binmore, therefore, proposes an intellectually appealing way to unify our understanding of utilitarianism and egalitarianism: utilitarianism is the reasonable answer when agreements are enforceable, egalitarianism is the answer when they are not. This helps explain why both utilitarianism and egalitarianism can, to some extent, resonate with our intuitions. It is not that one is absolutely right and the other absolutely wrong. The answer depends on the context.11

When will either utilitarianism or egalitarianism prevail?

Utilitarianism will prevail when moral agreements can be binding. In real life, binding agreements typically involve a third party, such as judges and the legal system. But, from Binmore’s perspective, there is no real third party for agreements on social contracts in the Game of Life, because all the potential enforcers are themselves part of the Game of Life.

Social contracts are not contracts in the legal sense, since there is nobody outside the Game of Life to whom one can appeal for redress if someone cheats. Everybody—up to and including the Lord High Executioner—is a player in the Game of Life. — Binmore (1998)

When thinking about binding agreements, one therefore has to think about cases in which they are binding because the structure of the game itself makes violating them unattractive. Binmore gives the example of insurance games, which may have played an important role in the evolution of morality in our ancestral past.

In an environment in which daily food intake was needed for survival, but each individual faced substantial variation in success at finding food, mutual sharing made sense as an insurance scheme. Today I get some of your meat, tomorrow you get some of mine. The insurance contract is binding because failing to respect it would trigger sanctions, such as exclusion from future help.
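The logic of this paragraph can be sketched with a standard repeated-game calculation. This is not Binmore's own model: the square-root utility, the two-hunter setup, the success probability and the discount factor are all assumptions made for illustration.

```python
import math


def utility(food):
    """Satisfaction from eating `food` units; diminishing returns (assumed form)."""
    return math.sqrt(food)


def expected_alone(p):
    """Expected daily utility of a hunter who eats only what they find (1 unit w.p. p)."""
    return p * utility(1.0) + (1 - p) * utility(0.0)


def expected_sharing(p):
    """Expected daily utility when two hunters pool their food and split it equally."""
    both = p * p            # both find food: each eats 1 unit
    one = 2 * p * (1 - p)   # exactly one finds food: each eats half a unit
    none = (1 - p) ** 2     # nobody finds food
    return both * utility(1.0) + one * utility(0.5) + none * utility(0.0)


def sharing_is_self_enforcing(p, delta):
    """Check whether a lucky hunter prefers sharing to keeping the whole kill.

    Keeping the kill gains utility(1) - utility(0.5) today, but the hunter is then
    excluded from sharing forever. `delta` (between 0 and 1) is the weight put on
    tomorrow relative to today.
    """
    temptation = utility(1.0) - utility(0.5)
    daily_loss = expected_sharing(p) - expected_alone(p)
    future_loss = delta / (1 - delta) * daily_loss
    return future_loss >= temptation


if __name__ == "__main__":
    print(expected_alone(0.5), expected_sharing(0.5))
    print(sharing_is_self_enforcing(0.5, delta=0.9))
    print(sharing_is_self_enforcing(0.5, delta=0.5))
```

In this sketch, sharing raises expected satisfaction because half a meal on a bad day is worth more than the half meal given up on a good day. The arrangement holds together only when the hunter cares enough about the future for the threat of exclusion to outweigh the temptation to keep the kill.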

To the extent that these agreements can be binding, a utilitarian solution can emerge in which meat is shared. Sharing is utilitarian because the person with food shares it for the benefit of all those who are part of the insurance agreement.

Binmore conjectures that situations of insurance were likely to be at the origin of the Golden Rule.

If the Golden Rule is understood as a simplified version of the device of the original position, I think an answer to this question can be found by asking why social animals evolved in the first place. This is generally thought to have been because food-sharing has survival value. — Binmore (2005)

This logic of insurance can be extended to any situation in which people can accept losses in some cases because, across a wide range of situations in which their needs and preferences differ, they end up better off overall. Consider a couple like Alice and Bob. On any decision about how to run their household, they have to agree on their rights and duties. They can be utilitarian to the extent that there is an overall gain for both of them across all their decisions. If Alice dislikes putting the bin out and mowing the lawn, Bob may be happy to do those tasks most of the time. If Alice prefers choosing the interior design, Bob may be happy for her to make most of those decisions. Not all of their bargaining problems need to be solved with a 50–50 split, because they are playing a larger game in which they can divide total gains across the household. They can therefore be utilitarian in specific situations because they are egalitarian in the larger game they play at the broader household level.
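A small numerical sketch illustrates how chore-by-chore utilitarian choices can add up to a balanced household deal. The chores and "dislike" scores are hypothetical numbers chosen so that the totals balance; nothing guarantees this in general.

```python
# Hypothetical "dislike" scores per chore as (Alice, Bob); higher means more unpleasant.
DISLIKE = {
    "bin": (5, 1),
    "lawn": (5, 2),
    "cooking": (1, 4),
    "laundry": (2, 4),
}


def assign_by_least_dislike(dislike):
    """Chore-by-chore utilitarian rule: whoever minds a chore less does it."""
    return {chore: ("Alice" if a <= b else "Bob") for chore, (a, b) in dislike.items()}


def total_burden(dislike, assignment):
    """Total unpleasantness each person carries under a given assignment."""
    burden = {"Alice": 0.0, "Bob": 0.0}
    for chore, (a, b) in dislike.items():
        who = assignment[chore]
        burden[who] += a if who == "Alice" else b
    return burden


def even_split_burden(dislike):
    """Total unpleasantness if every single chore is split 50-50."""
    return {
        "Alice": sum(a for a, _ in dislike.values()) / 2,
        "Bob": sum(b for _, b in dislike.values()) / 2,
    }


if __name__ == "__main__":
    assignment = assign_by_least_dislike(DISLIKE)
    print(assignment)
    print("specialised:", total_burden(DISLIKE, assignment))
    print("50-50 on every chore:", even_split_burden(DISLIKE))
```

With these assumed scores, specialising leaves Alice and Bob with equal total burdens, and both carry less than they would under a 50–50 split of every chore. If one of them disliked every chore more than the other, the same rule would load everything onto one person and the overall balance would disappear.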

Egalitarianism will prevail when agreements cannot be binding, which is the case in many situations. Between two people who interact only once in one specific way, there is no larger game whose potential gains in other situations can be used to discipline an agreement in that situation. In such a case, utilitarianism will not work.

If Alice and Bob are assigned to a group project and do not expect to work with each other again, Bob’s claim that he dislikes some parts of the work and would prefer Alice to do them may fall flat. Alice may simply ask for a strictly equal share as a fair solution, independently of how much each of them likes or dislikes the tasks.

Similarly, even when people interact repeatedly, if they have characteristics that would systematically bias the gains from a utilitarian solution in their favour, that solution may be rejected. Let us return to the example of Bob disliking household chores more than Alice and enjoying leisure more than she does. Here, the utilitarian solution would lead to an uneven deal at the household level. Bob might try to appeal to the veil of ignorance: “Put yourself in my shoes, I really hate dishwashing.” Alice is not bound by the fiction of the veil of ignorance, and she may simply reply, “I don’t care that you really dislike it. You still have to do it sometimes.” Alice has no interest in abiding by a utilitarian solution if it puts her at a systematic disadvantage. Utilitarianism will only be followed when, overall, it works for both.

In other words, an agreement can be utilitarian in single situations only if this utilitarianism leads to a form of egalitarianism overall, because the agreement over the Game of Life itself cannot be binding.

“It’s unfair”: what does it mean?

From toddlers complaining to their parents to activists demonstrating in the streets, claims about unfairness are commonplace. What do they mean? Surprisingly, those who make these claims would often struggle to articulate a coherent conceptual framework to justify them. Yet such claims work because they are real bargaining claims that we can grasp at a deeper intuitive level.

In most situations in which moral agreements are not binding, the statement “it’s unfair” has an egalitarian basis. When Alice says “it’s unfair”, she is claiming that she is the one who is worse off in the situation and that another way of organising the interaction would improve her position. There is no reason for her to agree to a situation in which she is worse off and to let the social contract on which it is based continue unchanged. Instead, she can simply say that she disagrees with that social contract and ask for another one that improves her situation. If she has equal bargaining power, her claim cannot simply be ignored.

In some situations in which agreements are binding, the statement “it’s unfair” takes on a utilitarian meaning. It becomes a claim that, in a specific situation in which it is commonly accepted that the sum of satisfaction should be maximised, the deal was not respected. This deal works as part of a larger game that is itself constrained by a broader egalitarian balance acceptable to both sides. Overall, everybody benefits from maximising satisfaction in each specific situation. But if people refuse to respect the deal when it is your turn to benefit from it, the statement “it’s unfair” calls out this breach of the commonly accepted arrangement.

Binmore’s perspective lifts the mystery surrounding questions of fairness. It helps explain why both utilitarianism and egalitarianism can feel intuitive and have defenders. And it offers a simple reason why either may prevail in a given social setting.

For most games, and in particular for the Game of Life, there is no way to make moral agreements binding. Hence, the utilitarian solution is often not sustainable there. This is simply because nothing prevents someone from saying, “I don’t care about your moral story, I am not happy with the deal you propose.” For that reason, egalitarianism typically prevails when people have equal bargaining power.

Binmore does not reach this conclusion from metaphysical or a priori moral assumptions. He builds his framework from the ground up, on the basis of a matter-of-fact understanding of human interactions, the problems they face and the solutions they can adopt. Once one takes that approach, there is an air of inevitability to the conclusions Binmore reaches. We do not reach them because we like them, and then make metaphysical assumptions along the way to help us land on these conclusions. We reach them because they follow from a naturalistic perspective that seeks to explain morality as it is, rather than describe it as we would like it to be.

References

Binmore, K. (1994) Game Theory and the Social Contract, Volume 1: Playing Fair. Cambridge, MA: MIT Press.

Binmore, K. (1998) Game Theory and the Social Contract, Volume 2: Just Playing. Cambridge, MA: MIT Press.

Binmore, K. (2005) Natural Justice. Oxford: Oxford University Press.

Bentham, J. (1776) A Fragment on Government, Preface; in Burns, J. H. and Hart, H. L. A. (eds) (1977) A Comment on the Commentaries and A Fragment on Government. London: Athlone Press, p. 393.

Harsanyi, J.C. (1955) ‘Cardinal Welfare, Individualistic Ethics, and Interpersonal Comparisons of Utility’, Journal of Political Economy, 63(4), pp. 309–321.

Harsanyi, J.C. (1977) ‘Morality and the Theory of Rational Behavior’, Social Research, 44(4), pp. 623–656.

Rawls, J. (1971) A Theory of Justice. Cambridge, MA: Harvard University Press.

Appendix: Harsanyi’s extended preferences

In the film Crime 101, Lou (Mark Ruffalo) tells Angie (Jennifer Jason Leigh):

I like the beach much more than you do.

Such statements are easy for us to understand. But even though they are intuitive, they are not trivial. Let us ask a simple question: what does it mean to say such a thing? How can we test whether this statement is true?

For many generations of economists, such a statement would have been seen as nonsensical because we cannot compare how much Lou likes something, which is something happening in his head, to how much Angie likes it, which is also something happening in her head.

Crash course on what preferences mean

What can we say about preferences?

Let us start with a statement by Lou such as: “I like going to the beach more than I like hiking.” Economists are fine with such statements. They are meaningful because they have practical consequences. If he is repeatedly given the choice between going to the beach and going hiking, we should see Lou choosing the beach more often.

What about a slightly different statement: “I like going to the beach twice as much as I like hiking”? This now suggests a precise quantitative comparison, as if there were some quantity of subjective satisfaction in Lou’s head when he engages in an activity. He is saying: my quantity of satisfaction when I go to the beach is twice my quantity of satisfaction when I go hiking.

For most of the twentieth century, mainstream economists basically rejected such statements as unverifiable. There is no direct way to measure what is happening in Lou’s head, so such a statement appears to have no practical consequences. However, in the book that launched game theory, von Neumann and Morgenstern offered an answer to this problem. Such a statement does lead to practical predictions when we consider the willingness Lou should show to take risks for each option. If Lou says that he likes the beach twice as much as hiking, he should be indifferent between hiking for sure and a gamble that gives him a 50 per cent chance of going to the beach and a 50 per cent chance of getting nothing. More generally, if he likes the beach three times as much as hiking, the corresponding threshold would be one-third; if four times as much, one-quarter; and so on.12
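The thresholds in this paragraph follow from one line of algebra. As a sketch, assume Lou's utility is normalised so that getting nothing is worth 0 and hiking is worth something positive. The symbols are introduced here for illustration: $k$ is how many times more Lou likes the beach than hiking, and $p$ is the chance of going to the beach at which he is indifferent.

```latex
u(\text{nothing}) = 0, \qquad u(\text{beach}) = k \, u(\text{hiking}), \qquad u(\text{hiking}) > 0

% Indifference between hiking for sure and the gamble:
u(\text{hiking}) = p \, u(\text{beach}) + (1 - p) \, u(\text{nothing}) = p \, k \, u(\text{hiking})

\Longrightarrow \quad p = \frac{1}{k}
\qquad \left(k = 2 \Rightarrow p = \tfrac{1}{2}, \quad k = 3 \Rightarrow p = \tfrac{1}{3}, \quad k = 4 \Rightarrow p = \tfrac{1}{4}\right)
```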

Now let us appreciate that the statement “I like the beach much more than you do” introduces another level of complexity. Lou is now comparing the quantity of satisfaction in his own brain with the quantity of satisfaction in Angie’s brain. Does it make sense to do something like that? Can it lead to predictions that can be tested? Most economists would say no, because we do not have a hedonometer that we could plug into Lou’s and Angie’s heads and that would provide an absolute measure of satisfaction on a common scale.

Harsanyi proposed a way to make sense of such statements by extending choice to hypothetical choices involving a swap of places. When Lou says, “I like the beach much more than you do”, this is equivalent to saying: “I would prefer to be me, with my preferences, at the beach than to be you, with your preferences, at the beach.” Harsanyi called such preferences extended preferences. If we accept such statements as meaningful, and if we apply the von Neumann–Morgenstern result to this kind of choice, then we can represent these preferences with a utility function that allows interpersonal comparisons.

In other words, saying things like “I like the beach more than you do” becomes a reflection of the ability to choose between possible person-swapping experiences.

Utilitarianism is very influential in modern discourse. Peter Singer is one of the most famous utilitarian philosophers, and, on Substack, Bentham's Bulldog is known for his defence of utilitarianism.

Rawls formulated his theory for large social questions, but we can use it for smaller social situations.

Rawls sees his theory as a procedural reinterpretation of Kantian morality, which says that you should only follow rules of behaviour that you would want everyone else to follow too. Both Kant and Rawls stated that they rejected the Golden Rule (don’t do to others what you would not want to be done to you) as a foundation of morality. They saw it as too subjective. But both Rawls’ veil of ignorance and Kant’s categorical imperative require some mind-reading: stepping into other people’s shoes and seeing social situations from their point of view. It is in that more general interpretation of the Golden Rule that Binmore sees the association between that rule and the veil of ignorance.

Once this process has led to a commonly agreed solution, Alice does not need to put herself in Bob’s shoes all the time. The veil of ignorance (stepping into the other person’s shoes) is used only when Alice and Bob are uncertain about how to agree, or when there is a disagreement.

Using the veil of ignorance thought experiment, if Alice is in the original position, she needs to be able to say something like “If I end up being Alice, I will enjoy watching crime dramas more than I would enjoy watching sci-fi series if I end up being Bob”.

Note that this requires Alice to know Bob’s preferences. We will assume that they know each other well enough for this to be common knowledge.

Note that utilitarianism allows us to care more for the most disadvantaged. Under the veil of ignorance, I might appreciate that the first few thousand dollars I could get would be worth more to me if I were a poor person than if I were a rich person. Hence, utilitarianism can justify caring more for the most disadvantaged people. What it does not allow is the absolute approach suggested by Rawls, where only the outcomes experienced by the most disadvantaged people would matter to us.

For the economist readers: This is simply saying that agents can be risk-averse under the veil of ignorance and maximise their utility. The more risk-averse they are, the more they will choose a social contract that favours the people who are worse off in society. Rawls’ solution is akin to assuming an infinite degree of risk aversion.
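To make this concrete, here is a small sketch of the choice between two social contracts under the veil. Everything specific in it is an assumption made for illustration: the two contracts and their incomes, the equal chance of ending up in either position, and the constant relative risk aversion (CRRA) utility function, which is one standard way to parameterise risk aversion rather than anything Rawls, Harsanyi or Binmore prescribe.

```python
import math


def crra_utility(income, gamma):
    """CRRA utility (assumed functional form); gamma is the degree of risk aversion."""
    if gamma == 1:
        return math.log(income)
    return income ** (1 - gamma) / (1 - gamma)


def expected_utility(incomes, gamma):
    """Expected utility behind the veil: equal chance of landing in each position."""
    return sum(crra_utility(x, gamma) for x in incomes) / len(incomes)


def preferred_contract(contracts, gamma):
    """Contract chosen by an expected-utility maximiser with risk aversion gamma."""
    return max(contracts, key=lambda name: expected_utility(contracts[name], gamma))


def maximin_contract(contracts):
    """Rawls-style choice: only the worst-off position matters."""
    return max(contracts, key=lambda name: min(contracts[name]))


# Hypothetical contracts: A has the larger total, B treats the worse-off better.
contracts = {"A": (20, 100), "B": (30, 60)}

for gamma in (0, 1, 2, 10):
    print("gamma =", gamma, "->", preferred_contract(contracts, gamma))
print("maximin ->", maximin_contract(contracts))
```

In this example, a risk-neutral agent (gamma = 0) picks A, the contract with the larger total, and so does a mildly risk-averse one (gamma = 1). From gamma = 2 upwards the agent picks B, which is also the maximin choice. As gamma grows, the expected-utility choice lines up with Rawls’ rule.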

For Rawls, compliance with the principles chosen behind the veil is secured by a natural duty of justice, that is, the duty to support and comply with just institutions (Rawls, 1971). For Harsanyi, it depends on a prior commitment to an impartially sympathetic humanitarian morality (Harsanyi, 1977). Both theories rely on a skyhook: an external motivational assumption not grounded in the theory itself.

This is an abridged presentation of Binmore’s framework where I zoom in on the final solution. To arrive at this conclusion, Binmore considers a fairly rich set of possible agreements in the form of contingent social contracts.

To be precise, what is equalised is not necessarily the final outcome itself, but the gains from cooperation. If Alice and Bob start the negotiation from different positions, that is, if their situations would differ in the event of disagreement, they will agree on an equal division of the gains from cooperation. These gains are added to each person’s initial situation prior to the agreement, so the final outcomes may still differ.

In addition, it should be noted that, in Binmore’s framework, the egalitarian solution delivers an equal split of the gains from cooperation only when people have equal bargaining power. I’ll treat the important case of unequal bargaining power in a later post.
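A short numerical sketch of the equal division of gains described above may help. The function name, the numbers, and the assumption that payoffs are measured in a common unit that can be freely divided are all mine, chosen for illustration. The sketch also assumes equal bargaining power.

```python
def equal_gains_split(total, disagreement_alice, disagreement_bob):
    """Split the gains from cooperation equally (equal bargaining power assumed).

    total: what Alice and Bob can share if they cooperate.
    disagreement_*: what each would get if they fail to agree.
    Returns the final payoffs (alice, bob).
    """
    gains = total - disagreement_alice - disagreement_bob
    if gains < 0:
        raise ValueError("cooperation produces no gains to share")
    share = gains / 2
    return disagreement_alice + share, disagreement_bob + share


# Hypothetical numbers: Bob starts from a better fallback position than Alice.
print(equal_gains_split(100, 10, 30))  # (40.0, 60.0)
```

Each person gains 30 from cooperating, but because Bob's fallback position is better, the final outcomes (40 and 60) are not equal.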

I focus here on agreements between people who are not a priori genetically related. One likely reason utilitarianism also resonates with our moral intuitions is that the logic of kin selection leads to a form of utilitarianism. Identical twins should, in theory, adopt a perfectly utilitarian approach, valuing their sibling’s outcome as much as their own and therefore ignoring differences in outcomes between them. Only the total success (in biological fitness) should matter.

A technical point only for economist readers: To be precise, the statement “I like the beach twice as much as I like hiking” needs to be understood relative to a reference point, because von Neumann–Morgenstern utility functions are unique only up to a positive affine transformation. Hence, u(x) and v(x) = a + bu(x), with b > 0, represent the same choices. If the utility of doing nothing is u(N) under u and v(N) under v, then the gain in utility from going to the beach relative to doing nothing is u(beach) - u(N), and the gain in utility from going hiking relative to doing nothing is u(hiking) - u(N). The statement u(beach) - u(N) = 2(u(hiking) - u(N)) stays valid if we replace u by v, since v(beach) - v(N) = 2(v(hiking) - v(N)) means a + bu(beach) - a - bu(N) = 2(a + bu(hiking) - a - bu(N)). The a terms cancel and we can divide both sides by b, which gives u(beach) - u(N) = 2(u(hiking) - u(N)).
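The same point can be checked numerically. In this sketch the utility values and the constants a and b are arbitrary numbers picked for illustration. The ratio of gains relative to doing nothing survives the transformation, while the raw ratio of utilities does not.

```python
from fractions import Fraction

# Hypothetical utilities for Lou under one representation u.
u = {"nothing": Fraction(1), "hiking": Fraction(4), "beach": Fraction(7)}


def affine(util, a, b):
    """Positive affine transformation v(x) = a + b * u(x), with b > 0."""
    if b <= 0:
        raise ValueError("b must be positive")
    return {option: a + b * value for option, value in util.items()}


def gain_ratio(util):
    """Gain from the beach over the gain from hiking, both relative to nothing."""
    return (util["beach"] - util["nothing"]) / (util["hiking"] - util["nothing"])


def raw_ratio(util):
    """Ratio of raw utility levels, which depends on the representation."""
    return util["beach"] / util["hiking"]


v = affine(u, a=Fraction(5), b=Fraction(7, 2))
print(gain_ratio(u), gain_ratio(v))  # 2 2
print(raw_ratio(u), raw_ratio(v))    # 7/4 59/38
```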

What this doesn't seem to address is the difference between fairness as outcomes being identical regardless of inputs and fairness as outcomes being proportional to contribution. There, very simplistically, conservatives and socialists seem to have different intuitions. I presume there was some socio-economic optimum that balanced the capable going on strike with the less capable banding up and taking from the capable and that within a group with a balance that was inter-group competitive both intuitions were evolutionarily stable in individual psychologies.

I don’t follow at all. Let me voice my concerns (or misunderstandings?)

Fairness relates to our feelings about the costs and benefits of cooperation (or coordination). Using economics as an illustration, a fair wage is the best wage a person can get voluntarily from (theoretically) unlimited numbers of competing employers. The employment is the cooperative arrangement, with one supplying their effort and time, the other paying the salary. Similarly, a fair price for coffee is the best price I can get absent coercion.

I do not get what utilitarianism has to do with this. Nor do I get how anyone joining a team or relationship would be able to even remotely calculate long-term utility across multiple players. This establishes a standard of fairness that is ridiculously hard (impossible?) to calculate. Nor is it (as you mention) binding. So this is a total fail in my book.

Egalitarianism is even worse. It sweeps the entire issue of contributions vs rewards under the rug. The incentive in an egalitarian system is for everyone to free ride and contribute nothing. This totally destroys cooperation, leading to a situation where we are better off going solo than cooperating in the first place.

I don’t want to go on too long, but I think fairness is better framed as the best voluntary arrangement of costs and benefits one can get among competing alternatives (as per the economics examples). A great example of fairness is revealed when we look back at voluntary agreements among actual pirates. But that will take us too far…